- Multiple comparisons


In statistics, the multiple comparisons or multiple testing problem occurs when one considers a set of statistical inferences simultaneously.[1] Errors in inference, including confidence intervals that fail to include their corresponding population parameters or hypothesis tests that incorrectly reject the null hypothesis, are more likely to occur when one considers the set as a whole. Several statistical techniques have been developed to prevent this from happening, allowing significance levels for single and multiple comparisons to be directly compared. These techniques generally require a stronger level of evidence to be observed in order for an individual comparison to be deemed "significant", so as to compensate for the number of inferences being made.


Practical examples

The term "comparisons" in multiple comparisons typically refers to comparisons of two groups, such as a treatment group and a control group. "Multiple comparisons" arise when a statistical analysis encompasses a number of formal comparisons, with the presumption that attention will focus on the strongest differences among all comparisons that are made. Failure to compensate for multiple comparisons can have important real-world consequences, as illustrated by the following examples.

- Suppose the treatment is a new way of teaching writing to students, and the control is the standard way of teaching writing. Students in the two groups can be compared in terms of grammar, spelling, organization, content, and so on. As more attributes are compared, it becomes more likely that the treatment and control groups will appear to differ on at least one attribute.

- Suppose we consider the efficacy of a drug in terms of the reduction of any one of a number of disease symptoms. As more symptoms are considered, it becomes more likely that the drug will appear to be an improvement over existing drugs in terms of at least one symptom.

- Suppose we consider the safety of a drug in terms of the occurrences of different types of side effects. As more types of side effects are considered, it becomes more likely that the new drug will appear to be less safe than existing drugs in terms of at least one side effect.

In all three examples, as the number of comparisons increases, it becomes more likely that the groups being compared will appear to differ in terms of at least one attribute. However, a difference between the groups is only meaningful if it generalizes to an independent sample of data (e.g., to an independent set of people treated with the same drug). Our confidence that a result will generalize to independent data should generally be weaker if it is observed as part of an analysis that involves multiple comparisons, rather than an analysis that involves only a single comparison.

Confidence intervals and hypothesis tests

The family of statistical inferences that occur in a multiple comparisons analysis can comprise confidence intervals, hypothesis tests, or both in combination.

To illustrate the issue in terms of confidence intervals, note that a single confidence interval with a 95% coverage probability will likely contain the population parameter it is meant to contain, i.e., in the long run 95% of confidence intervals built in that way will contain the true population parameter. However, if one considers 100 confidence intervals simultaneously, each with coverage probability 0.95, it is highly likely that at least one interval will not contain its population parameter. The expected number of such non-covering intervals is 5, and if the intervals are independent, the probability that at least one interval does not contain its population parameter is $1 - 0.95^{100} \approx 99.4\%$.

If the inferences are hypothesis tests rather than confidence intervals, the same issue arises. With just one test performed at the 5% level, there is only a 5% chance of incorrectly rejecting the null hypothesis if the null hypothesis is true. However, for 100 tests where all null hypotheses are true, the expected number of incorrect rejections is 5. If the tests are independent, the probability of at least one incorrect rejection is 99.4%. These errors are called false positives.
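The two figures quoted above can be checked with a few lines of Python. This is only an illustrative sketch: the function names are made up for this example, and the second function assumes the inferences are independent, as the text does.

```python
def expected_errors(alpha: float, n: int) -> float:
    """Expected number of erroneous inferences when each of n has error rate alpha."""
    return alpha * n


def prob_at_least_one_error(alpha: float, n: int) -> float:
    """P(at least one error) among n independent inferences, each with error rate alpha."""
    return 1.0 - (1.0 - alpha) ** n


# 100 tests (or intervals), each at the 5% level, all null hypotheses true.
print(expected_errors(0.05, 100))                    # about 5
print(round(prob_at_least_one_error(0.05, 100), 3))  # about 0.994
```

The expected count does not depend on independence, whereas the "at least one" probability does; with dependent inferences it can be lower.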

Techniques have been developed to control the false positive error rate associated with performing multiple statistical tests. Similarly, techniques have been developed to adjust confidence intervals so that the probability of at least one of the intervals not covering its target value is controlled.

Example: Flipping coins

For example, one might declare that a coin was biased if in 10 flips it landed heads at least 9 times. Indeed, if one assumes as a null hypothesis that the coin is fair, then the probability that a fair coin would come up heads at least 9 out of 10 times is $(10 + 1) \times (1/2)^{10} \approx 0.0107$. This is relatively unlikely, and under statistical criteria such as p-value < 0.05, one would declare that the null hypothesis should be rejected, i.e., that the coin is unfair.

A multiple-comparisons problem arises if one wants to use this test (which is appropriate for testing the fairness of a single coin) to test the fairness of many coins. Imagine that one were to test 100 fair coins by this method. Given that the probability of a fair coin coming up heads 9 or 10 times in 10 flips is 0.0107, seeing a particular (i.e., pre-selected) coin come up heads 9 or 10 times would still be very unlikely, but seeing any coin behave that way, without concern for which one, would be more likely than not. Precisely, the probability that all 100 fair coins are identified as fair by this criterion is $(1 - 0.0107)^{100} \approx 0.34$. Therefore, applying the single-test coin-fairness criterion to all 100 coins is more likely than not (probability about 0.66) to falsely identify at least one fair coin as unfair.
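The coin arithmetic can be reproduced directly from the binomial distribution. The sketch below is illustrative only; the helper name `prob_at_least_k_heads` is an assumption of this example, and the 100 coins are taken to be independent.

```python
from math import comb


def prob_at_least_k_heads(flips: int, k: int, p_heads: float = 0.5) -> float:
    """Binomial upper tail: P(at least k heads in `flips` independent flips)."""
    return sum(
        comb(flips, h) * p_heads**h * (1.0 - p_heads) ** (flips - h)
        for h in range(k, flips + 1)
    )


p_single = prob_at_least_k_heads(10, 9)  # 11/1024, about 0.0107
p_all_pass = (1.0 - p_single) ** 100     # all 100 fair coins judged fair, about 0.34
p_any_flagged = 1.0 - p_all_pass         # at least one fair coin judged unfair

print(round(p_single, 4), round(p_all_pass, 2), round(p_any_flagged, 2))
```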

Formalism

For hypothesis testing, the problem of multiple comparisons (also known as the multiple testing problem) results from the increase in type I error that occurs when statistical tests are used repeatedly. If n independent comparisons are performed, each at significance level $\alpha_{\text{per comparison}}$, the experiment-wide significance level $\alpha$, also termed FWER for familywise error rate, is given by

$$\alpha = 1 - \left(1 - \alpha_{\text{per comparison}}\right)^{n}.$$

Hence, unless the tests are perfectly dependent, $\alpha$ increases as the number of comparisons increases. If we do not assume that the comparisons are independent, then we can still say:

$$\alpha \le n \cdot \alpha_{\text{per comparison}},$$

which follows from Boole's inequality. Example: with $n = 6$ and $\alpha_{\text{per comparison}} = 0.05$,

$$1 - (1 - 0.05)^{6} \approx 0.2649 \le 6 \times 0.05 = 0.3.$$

You can use this result to assure that the familywise error rate is at most $\alpha$ by setting $\alpha_{\text{per comparison}} = \alpha / n$. This highly conservative method is known as the Bonferroni correction. A more sensitive correction can be obtained by solving the equation for the familywise error rate of n independent comparisons for $\alpha_{\text{per comparison}}$. This yields $\alpha_{\text{per comparison}} = 1 - (1 - \alpha)^{1/n}$, which is known as the Šidák correction.

Methods

Multiple testing correction refers to re-calculating probabilities obtained from a statistical test which was repeated multiple times. In order to retain a prescribed familywise error rate α in an analysis involving more than one comparison, the error rate for each comparison must be more stringent than α. Boole's inequality implies that if each test is performed to have type I error rate α/n, the total error rate will not exceed α. This is called the Bonferroni correction, and is one of the most commonly used approaches for multiple comparisons.
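As a rough illustration, the per-comparison levels implied by the Bonferroni correction (α/n) and by the Šidák correction (1 − (1 − α)^(1/n), obtained by inverting the independent-tests FWER formula) can be compared numerically. The function names are chosen for this sketch, and the FWER check assumes independent tests.

```python
def bonferroni_level(alpha: float, n: int) -> float:
    """Per-comparison level so that, by Boole's inequality, FWER <= alpha."""
    return alpha / n


def sidak_level(alpha: float, n: int) -> float:
    """Per-comparison level giving FWER == alpha for n independent tests."""
    return 1.0 - (1.0 - alpha) ** (1.0 / n)


def fwer_independent(per_comparison: float, n: int) -> float:
    """Familywise error rate of n independent tests at the given level."""
    return 1.0 - (1.0 - per_comparison) ** n


alpha, n = 0.05, 6
for name, level in (("Bonferroni", bonferroni_level(alpha, n)),
                    ("Sidak", sidak_level(alpha, n))):
    print(f"{name}: per-comparison level {level:.6f}, "
          f"FWER if independent {fwer_independent(level, n):.6f}")
```

Under independence the Šidák level is slightly larger than the Bonferroni level, which is why it is the less conservative of the two; the Bonferroni bound, however, holds without any independence assumption.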

In some situations, the Bonferroni correction is substantially conservative, i.e., the actual familywise error rate is much less than the prescribed level α. This occurs when the test statistics are highly dependent (in the extreme case where the tests are perfectly dependent, the familywise error rate with no multiple comparisons adjustment and the per-test error rates are identical). For example, in fMRI analysis[2][3], tests are done on over 100000 voxels in the brain. The Bonferroni method would require p-values to be smaller than .05/100000 to declare significance. Since adjacent voxels tend to be highly correlated, this threshold is generally too stringent.

Because simple techniques such as the Bonferroni method can be too conservative, there has been a great deal of attention paid to developing better techniques, such that the overall rate of false positives can be maintained without inflating the rate of false negatives unnecessarily. Such methods can be divided into general categories:

- Methods where total alpha can be proved to never exceed 0.05 (or some other chosen value) under any conditions. These methods provide "strong" control against Type I error, in all conditions including a partially correct null hypothesis.

- Methods where total alpha can be proved not to exceed 0.05 except under certain defined conditions.

- Methods which rely on an omnibus test before proceeding to multiple comparisons. Typically these methods require a significant ANOVA/Tukey's range test before proceeding to multiple comparisons. These methods have "weak" control of Type I error.

- Empirical methods, which control the proportion of Type I errors adaptively, utilizing correlation and distribution characteristics of the observed data.

The advent of computerized resampling methods, such as bootstrapping and Monte Carlo simulations, has given rise to many techniques in the last category (empirical methods). In some cases where exhaustive permutation resampling is performed, these tests provide exact, strong control of Type I error rates; in other cases, such as bootstrap sampling, they provide only approximate control.

Post-hoc testing of ANOVAs

Multiple comparison procedures are commonly used in an analysis of variance after obtaining a significant omnibus test result, like the ANOVA F-test. The significant ANOVA result suggests rejecting the global null hypothesis H0 that the means are the same across the groups being compared. Multiple comparison procedures are then used to determine which means differ. In a one-way ANOVA involving K group means, there are K(K − 1)/2 pairwise comparisons.

A number of methods have been proposed for this problem, some of which are:

- Single-step procedures

- Tukey–Kramer method (Tukey's HSD) (1951)

- Scheffé method (1953)

- Multi-step procedures based on Studentized range statistic

- Duncan's new multiple range test (1955)

- The Nemenyi test is similar to Tukey's range test in ANOVA.

- The Bonferroni–Dunn test allows comparisons, controlling the familywise error rate.

- Student–Newman–Keuls post-hoc analysis

If the variances of the groups being compared are similar, the Tukey–Kramer method is generally viewed as performing optimally or near-optimally in a broad variety of circumstances.[4] The situation where the variances of the groups being compared differ is more complex, and different methods perform well in different circumstances.

The Kruskal–Wallis test is the non-parametric alternative to ANOVA. Multiple comparisons can be done using pairwise comparisons (for example using Wilcoxon rank sum tests) and using a correction to determine if the post-hoc tests are significant (for example a Bonferroni correction).
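A minimal sketch of the correction step follows. It assumes the pairwise tests have already been run and their raw p-values collected; the group labels and p-values below are made-up placeholders, not results from any study. Each raw p-value is multiplied by the number of pairwise comparisons, K(K − 1)/2, and capped at 1.

```python
from itertools import combinations


def bonferroni_adjust(p_values):
    """Bonferroni-adjusted p-values: multiply by the number of tests, cap at 1."""
    m = len(p_values)
    return [min(1.0, m * p) for p in p_values]


groups = ["A", "B", "C", "D"]          # hypothetical group labels (K = 4)
pairs = list(combinations(groups, 2))  # K(K - 1)/2 = 6 pairwise comparisons

# Placeholder raw p-values, one per pair, in the same order as `pairs`.
raw_p = [0.004, 0.030, 0.200, 0.012, 0.650, 0.045]
adjusted = bonferroni_adjust(raw_p)

for (g1, g2), p, p_adj in zip(pairs, raw_p, adjusted):
    verdict = "significant" if p_adj < 0.05 else "not significant"
    print(f"{g1} vs {g2}: raw p = {p:.3f}, adjusted p = {p_adj:.3f} ({verdict})")
```

Comparing an adjusted p-value with α is equivalent to comparing the raw p-value with α divided by the number of comparisons.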

Large-scale multiple testing

Traditional methods for multiple comparisons adjustments focus on correcting for modest numbers of comparisons, often in an analysis of variance. A different set of techniques has been developed for "large-scale multiple testing," in which thousands or even greater numbers of tests are performed. For example, in genomics, when using technologies such as microarrays, expression levels of tens of thousands of genes can be measured, and genotypes for millions of genetic markers can be measured. Particularly in the field of genetic association studies, there has been a serious problem with non-replication: a result is strongly statistically significant in one study but fails to be replicated in a follow-up study. Such non-replication can have many causes, but it is widely considered that failure to fully account for the consequences of making multiple comparisons is one of the causes.

In different branches of science, multiple testing is handled in different ways. It has been argued that if statistical tests are only performed when there is a strong basis for expecting the result to be true, multiple comparisons adjustments are not necessary.[5] It has also been argued that use of multiple testing corrections is an inefficient way to perform empirical research, since multiple testing adjustments control false positives at the potential expense of many more false negatives. On the other hand, it has been argued that advances in measurement and information technology have made it far easier to generate large datasets for exploratory analysis, often leading to the testing of large numbers of hypotheses with no prior basis for expecting many of the hypotheses to be true. In this situation, very high false positive rates are expected unless multiple comparisons adjustments are made.[6]

For large-scale testing problems where the goal is to provide definitive results, the familywise error rate remains the most accepted parameter for ascribing significance levels to statistical tests. Alternatively, if a study is viewed as exploratory, or if significant results can be easily re-tested in an independent study, control of the false discovery rate (FDR)[7][8][9] is often preferred. The FDR, defined as the expected proportion of false positives among all significant tests, allows researchers to identify a set of "candidate positives," of which a high proportion are likely to be true. The false positives within the candidate set can then be identified in a follow-up study.
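One widely used way to control the FDR is the step-up procedure of Benjamini and Hochberg.[7] The sketch below is an illustrative implementation, not taken from the text above: it sorts the m p-values, finds the largest rank k with p(k) ≤ k·q/m, and rejects the k hypotheses with the smallest p-values. The target level `q` and the example p-values are assumptions made for this illustration, and the procedure's guarantee is usually stated for independent test statistics.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return a list of booleans: True where the hypothesis is rejected.

    Step-up rule: find the largest rank k (1-based, in sorted order) such
    that p_(k) <= k * q / m, then reject the k smallest p-values.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank * q / m:
            k_max = rank  # keep the largest rank that satisfies the bound
    rejected = [False] * m
    for idx in order[:k_max]:
        rejected[idx] = True
    return rejected


# Made-up p-values for illustration only.
p_vals = [0.212, 0.001, 0.039, 0.008, 0.041, 0.060, 0.074, 0.205, 0.042, 0.216]
print(benjamini_hochberg(p_vals, q=0.05))
```

Because the rule is step-up, a p-value that misses its own threshold is still rejected whenever some larger p-value meets its threshold.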

Assessing whether any alternative hypotheses are true

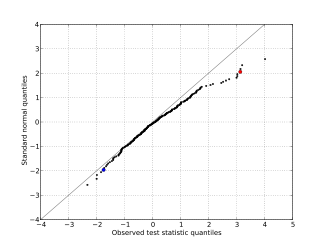

A normal quantile plot for a simulated set of test statistics that have been standardized to be Z-scores under the null hypothesis. The departure of the upper tail of the distribution from the expected trend along the diagonal is due to the presence of substantially more large test statistic values than would be expected if all null hypotheses were true. The red point corresponds to the fourth largest observed test statistic, which is 3.13, versus an expected value of 2.06. The blue point corresponds to the fifth smallest test statistic, which is -1.75, versus an expected value of -1.96. The graph suggests that it is unlikely that all the null hypotheses are true, and that most or all instances of a true alternative hypothesis result from deviations in the positive direction.


A basic question faced at the outset of analyzing a large set of testing results is whether there is evidence that any of the alternative hypotheses are true. One simple meta-test that can be applied when it is assumed that the tests are independent of each other is to use the Poisson distribution as a model for the number of significant results at a given level α that would be found when all null hypotheses are true. If the observed number of positives is substantially greater than what should be expected, this suggests that there are likely to be some true positives among the significant results. For example, if 1000 independent tests are performed, each at level α = 0.05, we expect 50 significant tests to occur when all null hypotheses are true. Based on the Poisson distribution with mean 50, the probability of observing more than 61 significant tests is less than 0.05, so if we observe more than 61 significant results, it is very likely that some of them correspond to situations where the alternative hypothesis holds. A drawback of this approach is that it over-states the evidence that some of the alternative hypotheses are true when the test statistics are positively correlated, which commonly occurs in practice.
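The Poisson tail probabilities used in this kind of meta-test can be computed exactly with the standard library. The sketch below is illustrative; the function names are chosen for this example, and it prints the tail probability for several candidate cut-offs so that the threshold can be read off rather than assumed.

```python
from math import exp, lgamma, log


def poisson_pmf(k: int, mean: float) -> float:
    """P(X = k) for X ~ Poisson(mean), computed on the log scale for stability."""
    return exp(k * log(mean) - mean - lgamma(k + 1))


def poisson_tail_above(k: int, mean: float) -> float:
    """P(X > k) for X ~ Poisson(mean)."""
    return 1.0 - sum(poisson_pmf(j, mean) for j in range(0, k + 1))


n_tests, alpha = 1000, 0.05
mean_null = n_tests * alpha  # expected number of significant tests if all nulls hold

for cutoff in (55, 58, 61, 62, 65, 70):
    print(f"P(more than {cutoff} significant results) = "
          f"{poisson_tail_above(cutoff, mean_null):.4f}")
```

As noted above, these probabilities are only meaningful when the tests are independent; positive correlation among the test statistics makes large counts more likely than the Poisson model suggests.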

Another common approach that can be used in situations where the test statistics can be standardized to Z-scores is to make a normal quantile plot of the test statistics. If the observed quantiles are markedly more dispersed than the normal quantiles, this suggests that some of the significant results may be true positives.
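The coordinates of such a plot can be sketched as follows. This is a minimal illustration: the function name is hypothetical, and the plotting positions (i − 0.5)/n are one common convention among several, assumed here for simplicity.

```python
from statistics import NormalDist


def normal_quantile_pairs(z_scores):
    """Pair each sorted Z-score with its expected standard normal quantile.

    Uses plotting positions (i - 0.5) / n for i = 1..n (an assumed convention).
    Returns a list of (expected_quantile, observed_z) tuples.
    """
    n = len(z_scores)
    std_normal = NormalDist()  # mean 0, standard deviation 1
    expected = [std_normal.inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]
    return list(zip(expected, sorted(z_scores)))


# Made-up Z-scores for illustration only.
for expected_q, observed_z in normal_quantile_pairs([2.0, -1.0, 0.5, 3.1, -0.2]):
    print(f"expected {expected_q:+.2f}   observed {observed_z:+.2f}")
```

Points lying well above the diagonal in the upper tail (observed much larger than expected) correspond to more large test statistics than the null hypotheses alone would produce.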

See also

- Key concepts

- Familywise error rate

- False positive rate

- False discovery rate (FDR)

- Post-hoc analysis

- Experimentwise error rate

- General methods of alpha adjustment for multiple comparisons

- Closed testing procedure

- Bonferroni correction

- Boole–Bonferroni bound

- Holm–Bonferroni method

- Westfall–Young step-down approach

- method of Benjamini and Hochberg

- Testing hypotheses suggested by the data

References

- Miller, R.G. (1981). Simultaneous Statistical Inference 2nd Ed. Springer Verlag New York. ISBN 0-387-90548-0.

- Logan and Rowe (2004). An evaluation of thresholding techniques in fMRI analysis. Neuroimage 22(1):95-108.

- Logan, Geliazkova, and Rowe (2008). An Evaluation of spatial thresholding techniques in fMRI analysis. Human Brain Mapping 29(12):1379-1389.

- Stoline, Michael R. (1981). "The Status of Multiple Comparisons: Simultaneous Estimation of All Pairwise Comparisons in One-Way ANOVA Designs". The American Statistician (American Statistical Association) 35 (3): 134–141. doi:10.2307/2683979. JSTOR 2683979.

- Rothman, Kenneth J. (1990). "No Adjustments Are Needed for Multiple Comparisons". Epidemiology (Lippincott Williams & Wilkins) 1 (1): 43–46. doi:10.1097/00001648-199001000-00010. JSTOR 20065622. PMID 2081237.

- Ioannidis, JPA (2005). "Why Most Published Research Findings Are False". PLoS Med 2 (8): e124. doi:10.1371/journal.pmed.0020124. PMC 1182327. PMID 16060722. http://www.pubmedcentral.nih.gov/articlerender.fcgi?tool=pmcentrez&artid=1182327.

- Benjamini, Yoav; Hochberg, Yosef (1995). "Controlling the false discovery rate: a practical and powerful approach to multiple testing". Journal of the Royal Statistical Society, Series B (Methodological) (Blackwell Publishing) 57 (1): 125–133. JSTOR 2346101.

- Storey, JD; Tibshirani, Robert (2003). "Statistical significance for genome-wide studies". PNAS 100 (16): 9440–9445. doi:10.1073/pnas.1530509100. JSTOR 3144228. PMC 170937. PMID 12883005. http://www.pubmedcentral.nih.gov/articlerender.fcgi?tool=pmcentrez&artid=170937.

- Efron, Bradley; Tibshirani, Robert; Storey, John D.; Tusher, Virginia (2001). "Empirical Bayes analysis of a microarray experiment". Journal of the American Statistical Association 96 (456): 1151–1160. doi:10.1198/016214501753382129. JSTOR 3085878.


Wikimedia Foundation. 2010.