- Kalman filter


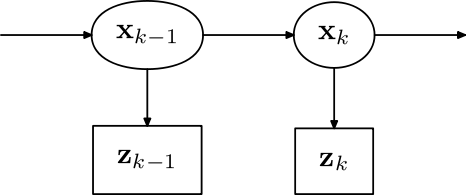

Roles of the variables in the Kalman filter.

In statistics, the Kalman filter is a mathematical method named after Rudolf E. Kálmán. It takes measurements observed over time, which contain noise (random variations) and other inaccuracies, and produces values that tend to be closer to the true values of the measured quantities and of the quantities calculated from them. The Kalman filter has many applications in technology and is an essential part of space and military technology development. A very simple example, and perhaps the most commonly used type of Kalman filter, is the phase-locked loop, which is now ubiquitous in FM radios and most electronic communications equipment.[citation needed] Extensions and generalizations of the method have also been developed.

The Kalman filter produces estimates of the true values of measurements and their associated calculated values by predicting a value, estimating the uncertainty of the predicted value, and computing a weighted average of the predicted value and the measured value. The most weight is given to the value with the least uncertainty. The estimates produced by the method tend to be closer to the true values than the original measurements because the weighted average has a better estimated uncertainty than either of the values that went into the weighted average.
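The weighting described above can be illustrated for a single scalar quantity. The following is a minimal sketch rather than part of the method's formal statement: the function name `fuse` and the numbers are illustrative assumptions, and each uncertainty is represented by a variance.

```python
def fuse(pred, pred_var, meas, meas_var):
    """Combine a predicted value and a measured value by a weighted average.

    The weight on the measurement grows as the prediction becomes
    less certain relative to the measurement.
    """
    gain = pred_var / (pred_var + meas_var)   # weight given to the measurement
    est = pred + gain * (meas - pred)         # lies between pred and meas
    est_var = (1.0 - gain) * pred_var         # smaller than both input variances
    return est, est_var


# Hypothetical numbers: an uncertain prediction and a more certain measurement.
est, est_var = fuse(pred=10.0, pred_var=4.0, meas=12.0, meas_var=1.0)
print(est, est_var)
```

With these inputs the estimate lands much closer to the measurement than to the prediction, and its variance is below the smaller of the two input variances.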

From a theoretical standpoint, the Kalman filter is an algorithm for efficiently doing exact inference in a linear dynamical system, which is a Bayesian model similar to a hidden Markov model but where the state space of the latent variables is continuous and where all latent and observed variables have a Gaussian distribution (often a multivariate Gaussian distribution).

Naming and historical development

The filter is named after Rudolf E. Kálmán, though Thorvald Nicolai Thiele[1][2] and Peter Swerling developed a similar algorithm earlier. Richard S. Bucy of the University of Southern California contributed to the theory, leading to it often being called the Kalman-Bucy filter. Stanley F. Schmidt is generally credited with developing the first implementation of a Kalman filter. It was during a visit by Kalman to the NASA Ames Research Center that he saw the applicability of his ideas to the problem of trajectory estimation for the Apollo program, leading to its incorporation in the Apollo navigation computer. This Kalman filter was first described and partially developed in technical papers by Swerling (1958), Kalman (1960) and Kalman and Bucy (1961).

Kalman filters have been vital in the implementation of the navigation systems of U.S. Navy nuclear ballistic missile submarines, and in the guidance and navigation systems of cruise missiles such as the U.S. Navy's Tomahawk missile and the U.S. Air Force's Air Launched Cruise Missile. They are also used in the guidance and navigation systems of the NASA Space Shuttle and the attitude control and navigation systems of the International Space Station.

This digital filter is sometimes called the Stratonovich–Kalman–Bucy filter because it is a special case of a more general, non-linear filter developed somewhat earlier by the Soviet mathematician Ruslan L. Stratonovich.[3][4][5][6] In fact, some of the special case linear filter's equations appeared in these papers by Stratonovich that were published before summer 1960, when Kalman met with Stratonovich during a conference in Moscow.

Overview of the calculation

The Kalman filter uses a system's dynamics model (i.e., physical laws of motion), known control inputs to that system, and measurements (such as from sensors) to form an estimate of the system's varying quantities (its state) that is better than the estimate obtained by using any one measurement alone. As such, it is a common sensor fusion algorithm.

All measurements and calculations based on models are estimates to some degree. Noisy sensor data, approximations in the equations that describe how a system changes, and external factors that are not accounted for introduce some uncertainty about the inferred values for a system's state. The Kalman filter averages a prediction of a system's state with a new measurement using a weighted average. The purpose of the weights is that values with better (i.e., smaller) estimated uncertainty are "trusted" more. The weights are calculated from the covariance, a measure of the estimated uncertainty of the prediction of the system's state. The result of the weighted average is a new state estimate that lies in between the predicted and measured state, and has a better estimated uncertainty than either alone. This process is repeated every time step, with the new estimate and its covariance informing the prediction used in the following iteration. This means that the Kalman filter works recursively and requires only the last "best guess" - not the entire history - of a system's state to calculate a new state.

When performing the actual calculations for the filter (as discussed below), the state estimate and covariances are coded into matrices to handle the multiple dimensions involved in a single set of calculations. This allows for representation of linear relationships between different state variables (such as position, velocity, and acceleration) in any of the transition models or covariances.

Example application

The Kalman filter is used in sensor fusion and data fusion. Typically, real-time systems produce multiple sequential measurements rather than making a single measurement to obtain the state of the system. These multiple measurements are then combined mathematically to generate the system's state at that time instant.

As an example application, consider the problem of determining the precise location of a truck. The truck can be equipped with a GPS unit that provides an estimate of the position within a few meters. The GPS estimate is likely to be noisy; readings 'jump around' rapidly, though always remaining within a few meters of the real position. The truck's position can also be estimated by integrating its speed and direction over time, determined by keeping track of wheel revolutions and the angle of the steering wheel. This is a technique known as dead reckoning. Typically, dead reckoning will provide a very smooth estimate of the truck's position, but it will drift over time as small errors accumulate. Additionally, the truck is expected to follow the laws of physics, so its position should be expected to change proportionally to its velocity.

In this example, the Kalman filter can be thought of as operating in two distinct phases: predict and update. In the prediction phase, the truck's old position will be modified according to the physical laws of motion (the dynamic or "state transition" model) plus any changes produced by the accelerator pedal and steering wheel. Not only will a new position estimate be calculated, but a new covariance will be calculated as well. Perhaps the covariance is proportional to the speed of the truck because we are more uncertain about the accuracy of the dead reckoning estimate at high speeds but very certain about the position when moving slowly. Next, in the update phase, a measurement of the truck's position is taken from the GPS unit. Along with this measurement comes some amount of uncertainty, and its covariance relative to that of the prediction from the previous phase determines how much the new measurement will affect the updated prediction. Ideally, if the dead reckoning estimates tend to drift away from the real position, the GPS measurement should pull the position estimate back towards the real position but not disturb it to the point of becoming rapidly changing and noisy.

Kalman filter in computer vision

Data fusion using a Kalman filter can assist computers to track objects in videos with low latency (not to be confused with a low number of latent variables). The tracking of objects is a dynamic problem, using data from sensor and camera images that always suffer from noise. This can sometimes be reduced by using higher quality cameras and sensors but can never be eliminated, so it is often desirable to use a noise reduction method.

The iterative predictor-corrector nature of the Kalman filter can be helpful, because at each time instance only one constraint on the state variable need be considered. This process is repeated, considering a different constraint at every time instance. All the measured data are accumulated over time and help in predicting the state.

Video can also be pre-processed, perhaps using a segmentation technique, to reduce the computation and hence latency.

Technical description and context

The Kalman filter is an efficient recursive filter that estimates the internal state of a linear dynamic system from a series of noisy measurements. It is used in a wide range of engineering and econometric applications from radar and computer vision to estimation of structural macroeconomic models,[7][8] and is an important topic in control theory and control systems engineering. Together with the linear-quadratic regulator (LQR), the Kalman filter solves the linear-quadratic-Gaussian control problem (LQG). The Kalman filter, the linear-quadratic regulator and the linear-quadratic-Gaussian controller are solutions to what probably are the most fundamental problems in control theory.

In most applications, the internal state is much larger (more degrees of freedom) than the few "observable" parameters which are measured. However, by combining a series of measurements, the Kalman filter can estimate the entire internal state.

In control theory, the Kalman filter is most commonly referred to as linear quadratic estimation (LQE).

In Dempster-Shafer theory, each state equation or observation is considered a special case of a Linear belief function and the Kalman filter is a special case of combining linear belief functions on a join-tree or Markov tree.

A wide variety of Kalman filters have now been developed, from Kalman's original formulation, now called the "simple" Kalman filter, the Kalman-Bucy filter, Schmidt's "extended" filter, the "information" filter, and a variety of "square-root" filters that were developed by Bierman, Thornton and many others. Perhaps the most commonly used type of very simple Kalman filter is the phase-locked loop, which is now ubiquitous in radios, especially frequency modulation (FM) radios, television sets, satellite communications receivers, outer space communications systems, and nearly any other electronic communications equipment.

Underlying dynamic system model

Kalman filters are based on linear dynamic systems discretized in the time domain. They are modelled on a Markov chain built on linear operators perturbed by Gaussian noise. The state of the system is represented as a vector of real numbers. At each discrete time increment, a linear operator is applied to the state to generate the new state, with some noise mixed in, and optionally some information from the controls on the system if they are known. Then, another linear operator mixed with more noise generates the observed outputs from the true ("hidden") state. The Kalman filter may be regarded as analogous to the hidden Markov model, with the key difference that the hidden state variables take values in a continuous space (as opposed to a discrete state space as in the hidden Markov model). Additionally, the hidden Markov model can represent an arbitrary distribution for the next value of the state variables, in contrast to the Gaussian noise model that is used for the Kalman filter. There is a strong duality between the equations of the Kalman Filter and those of the hidden Markov model. A review of this and other models is given in Roweis and Ghahramani (1999)[9] and Hamilton (1994), Chapter 13.[10]

In order to use the Kalman filter to estimate the internal state of a process given only a sequence of noisy observations, one must model the process in accordance with the framework of the Kalman filter. This means specifying the following matrices: Fk, the state-transition model; Hk, the observation model; Qk, the covariance of the process noise; Rk, the covariance of the observation noise; and sometimes Bk, the control-input model, for each time-step, k, as described below.

Model underlying the Kalman filter. Squares represent matrices. Ellipses represent multivariate normal distributions (with the mean and covariance matrix enclosed). Unenclosed values are vectors. In the simple case, the various matrices are constant with time, and thus the subscripts are dropped, but the Kalman filter allows any of them to change each time step.


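The Kalman filter model assumes the true state at time k is evolved from the state at (k − 1) according to

$$\mathbf{x}_k = \mathbf{F}_k \mathbf{x}_{k-1} + \mathbf{B}_k \mathbf{u}_k + \mathbf{w}_k$$

where

- $\mathbf{F}_k$ is the state transition model which is applied to the previous state $\mathbf{x}_{k-1}$;

- $\mathbf{B}_k$ is the control-input model which is applied to the control vector $\mathbf{u}_k$;

- $\mathbf{w}_k$ is the process noise which is assumed to be drawn from a zero mean multivariate normal distribution with covariance $\mathbf{Q}_k$: $\mathbf{w}_k \sim \mathcal{N}(\mathbf{0}, \mathbf{Q}_k)$.

At time k an observation (or measurement) $\mathbf{z}_k$ of the true state $\mathbf{x}_k$ is made according to

$$\mathbf{z}_k = \mathbf{H}_k \mathbf{x}_k + \mathbf{v}_k$$

where $\mathbf{H}_k$ is the observation model which maps the true state space into the observed space and $\mathbf{v}_k$ is the observation noise which is assumed to be zero mean Gaussian white noise with covariance $\mathbf{R}_k$: $\mathbf{v}_k \sim \mathcal{N}(\mathbf{0}, \mathbf{R}_k)$.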

The initial state, and the noise vectors at each step {x0, w1, ..., wk, v1 ... vk} are all assumed to be mutually independent.
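To make the generative model concrete, the sketch below draws a state sequence and the corresponding observations from it using NumPy. The function name `simulate`, the constant matrices, and the numerical values are assumptions chosen for illustration; the model itself allows the matrices to change at every step.

```python
import numpy as np


def simulate(F, H, Q, R, x0, steps, rng, B=None, u=None):
    """Draw states and observations from the linear-Gaussian model.

    x_k = F x_{k-1} + B u + w_k,  w_k ~ N(0, Q)
    z_k = H x_k + v_k,            v_k ~ N(0, R)
    All matrices are held constant over time in this sketch.
    """
    n, m = F.shape[0], H.shape[0]
    x = np.asarray(x0, dtype=float)
    states, observations = [], []
    for _ in range(steps):
        w = rng.multivariate_normal(np.zeros(n), Q)   # process noise
        v = rng.multivariate_normal(np.zeros(m), R)   # observation noise
        x = F @ x + w
        if B is not None and u is not None:
            x = x + B @ u                             # known control input
        z = H @ x + v
        states.append(x)
        observations.append(z)
    return np.array(states), np.array(observations)


rng = np.random.default_rng(42)
F = np.array([[1.0, 1.0], [0.0, 1.0]])   # position advances by velocity each step
H = np.array([[1.0, 0.0]])               # only position is observed
Q = 0.01 * np.eye(2)
R = np.array([[0.25]])
states, observations = simulate(F, H, Q, R, x0=[0.0, 1.0], steps=20, rng=rng)
print(states.shape, observations.shape)  # (20, 2) (20, 1)
```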

Many real dynamical systems do not exactly fit this model. In fact, unmodelled dynamics can seriously degrade the filter performance, even when it was supposed to work with unknown stochastic signals as inputs. The reason for this is that the effect of unmodelled dynamics depends on the input, and, therefore, can bring the estimation algorithm to instability (it diverges). On the other hand, independent white noise signals will not make the algorithm diverge. The problem of separating between measurement noise and unmodelled dynamics is a difficult one and is treated in control theory under the framework of robust control.

The Kalman filter

The Kalman filter is a recursive estimator. This means that only the estimated state from the previous time step and the current measurement are needed to compute the estimate for the current state. In contrast to batch estimation techniques, no history of observations and/or estimates is required. In what follows, the notation $\hat{\mathbf{x}}_{n|m}$ represents the estimate of $\mathbf{x}$ at time n given observations up to and including time m.

The state of the filter is represented by two variables:

- $\hat{\mathbf{x}}_{k|k}$, the a posteriori state estimate at time k given observations up to and including time k;

- $\mathbf{P}_{k|k}$, the a posteriori error covariance matrix (a measure of the estimated accuracy of the state estimate).

The Kalman filter can be written as a single equation; however, it is most often conceptualized as two distinct phases: "Predict" and "Update". The predict phase uses the state estimate from the previous timestep to produce an estimate of the state at the current timestep. This predicted state estimate is also known as the a priori state estimate because, although it is an estimate of the state at the current timestep, it does not include observation information from the current timestep. In the update phase, the current a priori prediction is combined with current observation information to refine the state estimate. This improved estimate is termed the a posteriori state estimate.

Typically, the two phases alternate, with the prediction advancing the state until the next scheduled observation, and the update incorporating the observation. However, this is not necessary; if an observation is unavailable for some reason, the update may be skipped and multiple prediction steps performed. Likewise, if multiple independent observations are available at the same time, multiple update steps may be performed (typically with different observation matrices Hk).

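Predict

Predicted (a priori) state estimate: $\hat{\mathbf{x}}_{k|k-1} = \mathbf{F}_k \hat{\mathbf{x}}_{k-1|k-1} + \mathbf{B}_k \mathbf{u}_k$

Predicted (a priori) estimate covariance: $\mathbf{P}_{k|k-1} = \mathbf{F}_k \mathbf{P}_{k-1|k-1} \mathbf{F}_k^T + \mathbf{Q}_k$

Update

Innovation or measurement residual: $\tilde{\mathbf{y}}_k = \mathbf{z}_k - \mathbf{H}_k \hat{\mathbf{x}}_{k|k-1}$

Innovation (or residual) covariance: $\mathbf{S}_k = \mathbf{H}_k \mathbf{P}_{k|k-1} \mathbf{H}_k^T + \mathbf{R}_k$

Optimal Kalman gain: $\mathbf{K}_k = \mathbf{P}_{k|k-1} \mathbf{H}_k^T \mathbf{S}_k^{-1}$

Updated (a posteriori) state estimate: $\hat{\mathbf{x}}_{k|k} = \hat{\mathbf{x}}_{k|k-1} + \mathbf{K}_k \tilde{\mathbf{y}}_k$

Updated (a posteriori) estimate covariance: $\mathbf{P}_{k|k} = (\mathbf{I} - \mathbf{K}_k \mathbf{H}_k) \mathbf{P}_{k|k-1}$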

The formula for the updated estimate and covariance above is only valid for the optimal Kalman gain. Use of other gain values requires a more complex formula found in the derivations section.
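The predict and update phases translate almost line for line into code. The following NumPy sketch is one possible arrangement, not a reference implementation; the function names `predict` and `update` and the scalar test values are assumptions, and the covariance update uses the short form that is valid only for the optimal gain.

```python
import numpy as np


def predict(x, P, F, Q, B=None, u=None):
    """Predict phase: a priori state estimate and covariance."""
    x_pred = F @ x
    if B is not None and u is not None:
        x_pred = x_pred + B @ u
    P_pred = F @ P @ F.T + Q
    return x_pred, P_pred


def update(x_pred, P_pred, z, H, R):
    """Update phase: a posteriori state estimate and covariance."""
    y = z - H @ x_pred                        # innovation
    S = H @ P_pred @ H.T + R                  # innovation covariance
    # K = P H^T S^{-1}, computed with a linear solve instead of an explicit
    # inverse (this relies on S and P_pred being symmetric).
    K = np.linalg.solve(S, H @ P_pred).T
    x = x_pred + K @ y
    P = (np.eye(len(x_pred)) - K @ H) @ P_pred
    return x, P


# One scalar step with hypothetical values: unit prior variance, unit
# measurement variance, so prediction and measurement are weighted equally.
x, P = np.array([0.0]), np.array([[1.0]])
F, Q = np.array([[1.0]]), np.array([[0.0]])
H, R = np.array([[1.0]]), np.array([[1.0]])
x_pred, P_pred = predict(x, P, F, Q)
x_new, P_new = update(x_pred, P_pred, np.array([2.0]), H, R)
print(x_new, P_new)
```

If an observation is missing at some step, `predict` can simply be called again without an intervening `update`, as described above.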

Invariants

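If the model is accurate, and the values for $\hat{\mathbf{x}}_{0|0}$ and $\mathbf{P}_{0|0}$ accurately reflect the distribution of the initial state values, then the following invariants are preserved (all estimates have a mean error of zero):

$$\mathrm{E}[\mathbf{x}_k - \hat{\mathbf{x}}_{k|k}] = \mathrm{E}[\mathbf{x}_k - \hat{\mathbf{x}}_{k|k-1}] = \mathbf{0}$$

$$\mathrm{E}[\tilde{\mathbf{y}}_k] = \mathbf{0}$$

where E[ξ] is the expected value of ξ, and the covariance matrices accurately reflect the covariance of the estimates:

$$\mathbf{P}_{k|k} = \mathrm{cov}(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k})$$

$$\mathbf{P}_{k|k-1} = \mathrm{cov}(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k-1})$$

$$\mathbf{S}_k = \mathrm{cov}(\tilde{\mathbf{y}}_k)$$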

Estimation of the noise covariances Qk and Rk

Practical implementation of the Kalman filter is often difficult because good estimates of the noise covariance matrices Qk and Rk are hard to obtain. Extensive research has been done on estimating these covariances from data. One of the more promising approaches is the Autocovariance Least-Squares (ALS) technique, which uses autocovariances of routine operating data to estimate the covariances.[11][12] GNU Octave code for calculating the noise covariance matrices using the ALS technique is available online under the GNU General Public License.[13]

Example application, technical

Consider a truck on perfectly frictionless, infinitely long straight rails. Initially the truck is stationary at position 0, but it is buffeted this way and that by random acceleration. We measure the position of the truck every Δt seconds, but these measurements are imprecise; we want to maintain a model of where the truck is and what its velocity is. We show here how we derive the model from which we create our Kalman filter.

Since F, H, R and Q are constant, their time indices are dropped.

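The position and velocity of the truck are described by the linear state space

$$\mathbf{x}_k = \begin{bmatrix} x \\ \dot{x} \end{bmatrix}$$

where $\dot{x}$ is the velocity, that is, the derivative of position with respect to time.

We assume that between the (k − 1)-th and k-th timesteps the truck undergoes a constant acceleration $a_k$ that is normally distributed, with mean 0 and standard deviation $\sigma_a$. From Newton's laws of motion we conclude that

$$\mathbf{x}_k = \mathbf{F} \mathbf{x}_{k-1} + \mathbf{G} a_k$$

(note that there is no $\mathbf{B}\mathbf{u}$ term since we have no known control inputs) where

$$\mathbf{F} = \begin{bmatrix} 1 & \Delta t \\ 0 & 1 \end{bmatrix}$$

and

$$\mathbf{G} = \begin{bmatrix} \frac{\Delta t^2}{2} \\ \Delta t \end{bmatrix}$$

so that

$$\mathbf{x}_k = \mathbf{F} \mathbf{x}_{k-1} + \mathbf{w}_k$$

where $\mathbf{w}_k \sim \mathcal{N}(\mathbf{0}, \mathbf{Q})$ and

$$\mathbf{Q} = \mathbf{G}\mathbf{G}^T \sigma_a^2 = \begin{bmatrix} \frac{\Delta t^4}{4} & \frac{\Delta t^3}{2} \\ \frac{\Delta t^3}{2} & \Delta t^2 \end{bmatrix} \sigma_a^2.$$

At each time step, a noisy measurement of the true position of the truck is made. Let us suppose the measurement noise $v_k$ is also normally distributed, with mean 0 and standard deviation $\sigma_z$.

$$\mathbf{z}_k = \mathbf{H} \mathbf{x}_k + \mathbf{v}_k$$

where

$$\mathbf{H} = \begin{bmatrix} 1 & 0 \end{bmatrix}$$

and

$$\mathbf{R} = \mathrm{E}[\mathbf{v}_k \mathbf{v}_k^T] = \begin{bmatrix} \sigma_z^2 \end{bmatrix}$$

We know the initial starting state of the truck with perfect precision, so we initialize

$$\hat{\mathbf{x}}_{0|0} = \begin{bmatrix} 0 \\ 0 \end{bmatrix}$$

and to tell the filter that we know the exact position, we give it a zero covariance matrix:

$$\mathbf{P}_{0|0} = \begin{bmatrix} 0 & 0 \\ 0 & 0 \end{bmatrix}$$

If the initial position and velocity are not known perfectly, the covariance matrix should be initialized with a suitably large number, say L, on its diagonal.

$$\mathbf{P}_{0|0} = \begin{bmatrix} L & 0 \\ 0 & L \end{bmatrix}$$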

The filter will then prefer the information from the first measurements over the information already in the model.
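The truck model can be simulated and filtered in a few lines. The sketch below is illustrative only: the time step, the two standard deviations, the number of steps and the random seed are assumed values, and the truck starts at rest at position 0 with a zero initial covariance as in the text.

```python
import numpy as np

rng = np.random.default_rng(0)
dt, sigma_a, sigma_z, steps = 1.0, 0.5, 3.0, 200   # assumed values

F = np.array([[1.0, dt], [0.0, 1.0]])
G = np.array([[0.5 * dt**2], [dt]])
Q = G @ G.T * sigma_a**2
H = np.array([[1.0, 0.0]])
R = np.array([[sigma_z**2]])

x_true = np.zeros(2)        # true [position, velocity]
x_est = np.zeros(2)         # initial state known exactly
P = np.zeros((2, 2))        # hence zero initial covariance

meas_err, est_err = [], []
for _ in range(steps):
    # Simulate the truck and a noisy position measurement.
    a = rng.normal(0.0, sigma_a)
    x_true = F @ x_true + G[:, 0] * a
    z = H @ x_true + rng.normal(0.0, sigma_z, size=1)

    # Predict.
    x_est = F @ x_est
    P = F @ P @ F.T + Q

    # Update.
    y = z - H @ x_est
    S = H @ P @ H.T + R
    K = P @ H.T @ np.linalg.inv(S)
    x_est = x_est + K @ y
    P = (np.eye(2) - K @ H) @ P

    meas_err.append(z[0] - x_true[0])
    est_err.append(x_est[0] - x_true[0])


def rms(errors):
    return float(np.sqrt(np.mean(np.square(errors))))


print("RMS position error, raw measurements:", rms(meas_err))
print("RMS position error, filter estimate: ", rms(est_err))
```

In a run like this the filtered position error is expected to be noticeably smaller than the raw measurement error, because the filter combines each measurement with the motion model.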

Derivations

Deriving the a posteriori estimate covariance matrix

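Starting with our invariant on the error covariance $\mathbf{P}_{k|k}$ as above

$$\mathbf{P}_{k|k} = \mathrm{cov}(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k})$$

substitute in the definition of $\hat{\mathbf{x}}_{k|k}$

$$\mathbf{P}_{k|k} = \mathrm{cov}\big(\mathbf{x}_k - (\hat{\mathbf{x}}_{k|k-1} + \mathbf{K}_k \tilde{\mathbf{y}}_k)\big)$$

and substitute $\tilde{\mathbf{y}}_k$

$$\mathbf{P}_{k|k} = \mathrm{cov}\big(\mathbf{x}_k - (\hat{\mathbf{x}}_{k|k-1} + \mathbf{K}_k(\mathbf{z}_k - \mathbf{H}_k \hat{\mathbf{x}}_{k|k-1}))\big)$$

and $\mathbf{z}_k$

$$\mathbf{P}_{k|k} = \mathrm{cov}\big(\mathbf{x}_k - (\hat{\mathbf{x}}_{k|k-1} + \mathbf{K}_k(\mathbf{H}_k \mathbf{x}_k + \mathbf{v}_k - \mathbf{H}_k \hat{\mathbf{x}}_{k|k-1}))\big)$$

and by collecting the error vectors we get

$$\mathbf{P}_{k|k} = \mathrm{cov}\big((\mathbf{I} - \mathbf{K}_k \mathbf{H}_k)(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k-1}) - \mathbf{K}_k \mathbf{v}_k\big)$$

Since the measurement error $\mathbf{v}_k$ is uncorrelated with the other terms, this becomes

$$\mathbf{P}_{k|k} = \mathrm{cov}\big((\mathbf{I} - \mathbf{K}_k \mathbf{H}_k)(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k-1})\big) + \mathrm{cov}(\mathbf{K}_k \mathbf{v}_k)$$

by the properties of vector covariance this becomes

$$\mathbf{P}_{k|k} = (\mathbf{I} - \mathbf{K}_k \mathbf{H}_k)\,\mathrm{cov}(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k-1})\,(\mathbf{I} - \mathbf{K}_k \mathbf{H}_k)^T + \mathbf{K}_k\,\mathrm{cov}(\mathbf{v}_k)\,\mathbf{K}_k^T$$

which, using our invariant on $\mathbf{P}_{k|k-1}$ and the definition of $\mathbf{R}_k$ becomes

$$\mathbf{P}_{k|k} = (\mathbf{I} - \mathbf{K}_k \mathbf{H}_k)\mathbf{P}_{k|k-1}(\mathbf{I} - \mathbf{K}_k \mathbf{H}_k)^T + \mathbf{K}_k \mathbf{R}_k \mathbf{K}_k^T$$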

This formula (sometimes known as the "Joseph form" of the covariance update equation) is valid for any value of Kk. It turns out that if Kk is the optimal Kalman gain, this can be simplified further as shown below.
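The difference between the Joseph form and the shorter covariance update can be checked numerically. In the sketch below the function names and the matrices are assumptions made for illustration; the deliberately non-optimal gain is simply half of the optimal one.

```python
import numpy as np


def joseph_update(P_pred, K, H, R):
    """Joseph form: valid for any gain K."""
    A = np.eye(P_pred.shape[0]) - K @ H
    return A @ P_pred @ A.T + K @ R @ K.T


def simple_update(P_pred, K, H):
    """Short form: correct only when K is the optimal Kalman gain."""
    return (np.eye(P_pred.shape[0]) - K @ H) @ P_pred


P_pred = np.array([[4.0, 1.0], [1.0, 2.0]])
H = np.array([[1.0, 0.0]])
R = np.array([[1.0]])

S = H @ P_pred @ H.T + R
K_opt = P_pred @ H.T @ np.linalg.inv(S)   # optimal Kalman gain
K_sub = 0.5 * K_opt                       # deliberately non-optimal gain

# The two forms agree for the optimal gain and disagree otherwise.
print(np.allclose(joseph_update(P_pred, K_opt, H, R), simple_update(P_pred, K_opt, H)))
print(np.allclose(joseph_update(P_pred, K_sub, H, R), simple_update(P_pred, K_sub, H)))
```

The Joseph form also returns a symmetric matrix by construction, which is one reason it is preferred when numerical robustness matters more than speed.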

Kalman gain derivation

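The Kalman filter is a minimum mean-square error estimator. The error in the a posteriori state estimation is

$$\mathbf{x}_k - \hat{\mathbf{x}}_{k|k}$$

We seek to minimize the expected value of the square of the magnitude of this vector, $\mathrm{E}[|\mathbf{x}_k - \hat{\mathbf{x}}_{k|k}|^2]$. This is equivalent to minimizing the trace of the a posteriori estimate covariance matrix $\mathbf{P}_{k|k}$. By expanding out the terms in the equation above and collecting, we get:

$$\mathbf{P}_{k|k} = \mathbf{P}_{k|k-1} - \mathbf{K}_k \mathbf{H}_k \mathbf{P}_{k|k-1} - \mathbf{P}_{k|k-1} \mathbf{H}_k^T \mathbf{K}_k^T + \mathbf{K}_k (\mathbf{H}_k \mathbf{P}_{k|k-1} \mathbf{H}_k^T + \mathbf{R}_k) \mathbf{K}_k^T$$

$$\mathbf{P}_{k|k} = \mathbf{P}_{k|k-1} - \mathbf{K}_k \mathbf{H}_k \mathbf{P}_{k|k-1} - \mathbf{P}_{k|k-1} \mathbf{H}_k^T \mathbf{K}_k^T + \mathbf{K}_k \mathbf{S}_k \mathbf{K}_k^T$$

The trace is minimized when the matrix derivative is zero:

$$\frac{\partial\, \mathrm{tr}(\mathbf{P}_{k|k})}{\partial\, \mathbf{K}_k} = -2 (\mathbf{H}_k \mathbf{P}_{k|k-1})^T + 2 \mathbf{K}_k \mathbf{S}_k = 0$$

Solving this for $\mathbf{K}_k$ yields the Kalman gain:

$$\mathbf{K}_k \mathbf{S}_k = (\mathbf{H}_k \mathbf{P}_{k|k-1})^T = \mathbf{P}_{k|k-1} \mathbf{H}_k^T$$

$$\mathbf{K}_k = \mathbf{P}_{k|k-1} \mathbf{H}_k^T \mathbf{S}_k^{-1}$$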

This gain, which is known as the optimal Kalman gain, is the one that yields MMSE estimates when used.

Simplification of the a posteriori error covariance formula

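The formula used to calculate the a posteriori error covariance can be simplified when the Kalman gain equals the optimal value derived above. Multiplying both sides of our Kalman gain formula on the right by $\mathbf{S}_k \mathbf{K}_k^T$, it follows that

$$\mathbf{K}_k \mathbf{S}_k \mathbf{K}_k^T = \mathbf{P}_{k|k-1} \mathbf{H}_k^T \mathbf{K}_k^T$$

Referring back to our expanded formula for the a posteriori error covariance,

$$\mathbf{P}_{k|k} = \mathbf{P}_{k|k-1} - \mathbf{K}_k \mathbf{H}_k \mathbf{P}_{k|k-1} - \mathbf{P}_{k|k-1} \mathbf{H}_k^T \mathbf{K}_k^T + \mathbf{K}_k \mathbf{S}_k \mathbf{K}_k^T$$

we find the last two terms cancel out, giving

$$\mathbf{P}_{k|k} = \mathbf{P}_{k|k-1} - \mathbf{K}_k \mathbf{H}_k \mathbf{P}_{k|k-1} = (\mathbf{I} - \mathbf{K}_k \mathbf{H}_k) \mathbf{P}_{k|k-1}.$$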

This formula is computationally cheaper and thus nearly always used in practice, but is only correct for the optimal gain. If arithmetic precision is unusually low causing problems with numerical stability, or if a non-optimal Kalman gain is deliberately used, this simplification cannot be applied; the a posteriori error covariance formula as derived above must be used.

Sensitivity analysis

The Kalman filtering equations provide an estimate of the state $\hat{\mathbf{x}}_{k|k}$ and its error covariance $\mathbf{P}_{k|k}$ recursively. The estimate and its quality depend on the system parameters and the noise statistics fed as inputs to the estimator. This section analyzes the effect of uncertainties in the statistical inputs to the filter.[14] In the absence of reliable statistics or the true values of noise covariance matrices $\mathbf{Q}_k$ and $\mathbf{R}_k$, the expression

$$\mathbf{P}_{k|k} = (\mathbf{I} - \mathbf{K}_k \mathbf{H}_k) \mathbf{P}_{k|k-1} (\mathbf{I} - \mathbf{K}_k \mathbf{H}_k)^T + \mathbf{K}_k \mathbf{R}_k \mathbf{K}_k^T$$

no longer provides the actual error covariance. In other words, $\mathbf{P}_{k|k} \neq \mathrm{E}[(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k})(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k})^T]$. In most real time applications the covariance matrices that are used in designing the Kalman filter are different from the actual noise covariance matrices.[citation needed] This sensitivity analysis describes the behavior of the estimation error covariance when the noise covariances as well as the system matrices $\mathbf{F}_k$ and $\mathbf{H}_k$ that are fed as inputs to the filter are incorrect. Thus, the sensitivity analysis describes the robustness (or sensitivity) of the estimator to misspecified statistical and parametric inputs to the estimator.

This discussion is limited to the error sensitivity analysis for the case of statistical uncertainties. Here the actual noise covariances are denoted by $\mathbf{Q}_k^a$ and $\mathbf{R}_k^a$ respectively, whereas the design values used in the estimator are $\mathbf{Q}_k$ and $\mathbf{R}_k$ respectively. The actual error covariance is denoted by $\mathbf{P}_{k|k}^a$, and $\mathbf{P}_{k|k}$ as computed by the Kalman filter is referred to as the Riccati variable. When $\mathbf{Q}_k \equiv \mathbf{Q}_k^a$ and $\mathbf{R}_k \equiv \mathbf{R}_k^a$, this means that $\mathbf{P}_{k|k} = \mathbf{P}_{k|k}^a$. While computing the actual error covariance using $\mathbf{P}_{k|k}^a = \mathrm{E}[(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k})(\mathbf{x}_k - \hat{\mathbf{x}}_{k|k})^T]$, substituting for $\hat{\mathbf{x}}_{k|k}$ and using the fact that $\mathrm{E}[\mathbf{w}_k \mathbf{w}_k^T] = \mathbf{Q}_k^a$ and $\mathrm{E}[\mathbf{v}_k \mathbf{v}_k^T] = \mathbf{R}_k^a$, results in the following recursive equations for $\mathbf{P}_{k|k}^a$:

$$\mathbf{P}_{k|k-1}^a = \mathbf{F}_k \mathbf{P}_{k-1|k-1}^a \mathbf{F}_k^T + \mathbf{Q}_k^a$$

$$\mathbf{P}_{k|k}^a = (\mathbf{I} - \mathbf{K}_k \mathbf{H}_k) \mathbf{P}_{k|k-1}^a (\mathbf{I} - \mathbf{K}_k \mathbf{H}_k)^T + \mathbf{K}_k \mathbf{R}_k^a \mathbf{K}_k^T$$

- While computing $\mathbf{P}_{k|k}$, by design the filter implicitly assumes that $\mathrm{E}[\mathbf{w}_k \mathbf{w}_k^T] = \mathbf{Q}_k$ and $\mathrm{E}[\mathbf{v}_k \mathbf{v}_k^T] = \mathbf{R}_k$.

- The recursive expressions for $\mathbf{P}_{k|k}^a$ and $\mathbf{P}_{k|k}$ are identical except for the presence of $\mathbf{Q}_k^a$ and $\mathbf{R}_k^a$ in place of the design values $\mathbf{Q}_k$ and $\mathbf{R}_k$ respectively.
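The effect of misspecified noise statistics can be seen in a scalar sketch that runs the Riccati variable and the actual error covariance side by side. The function name `covariances` and all numerical values are assumptions for illustration; the gain is always computed from the design values, as the filter itself would do.

```python
def covariances(q_design, r_design, q_actual, r_actual,
                steps=50, f=1.0, h=1.0, p0=1.0):
    """Return (Riccati variable, actual error covariance) after `steps` steps
    of a scalar filter whose gain is computed from the design values."""
    p = p_a = p0
    for _ in range(steps):
        # Prediction: same recursion, different process noise covariance.
        p_pred = f * p * f + q_design
        pa_pred = f * p_a * f + q_actual
        # Gain from the design values only.
        k = p_pred * h / (h * p_pred * h + r_design)
        # Joseph-form updates: design R for the Riccati variable,
        # actual R for the actual error covariance.
        p = (1 - k * h) ** 2 * p_pred + k ** 2 * r_design
        p_a = (1 - k * h) ** 2 * pa_pred + k ** 2 * r_actual
    return p, p_a


matched = covariances(q_design=0.1, r_design=1.0, q_actual=0.1, r_actual=1.0)
optimistic = covariances(q_design=0.1, r_design=1.0, q_actual=0.1, r_actual=4.0)
print(matched)      # both values coincide
print(optimistic)   # actual covariance exceeds what the filter reports
```

When the measurement noise is underestimated at design time, the filter reports a covariance that is smaller than the error it actually commits.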

Square root form

One problem with the Kalman filter is its numerical stability. If the process noise covariance Qk is small, round-off error often causes a small positive eigenvalue to be computed as a negative number. This renders the numerical representation of the state covariance matrix P indefinite, while its true form is positive-definite.

Positive definite matrices have the property that they have a triangular matrix square root $\mathbf{P} = \mathbf{S}\mathbf{S}^T$. This can be computed efficiently using the Cholesky factorization algorithm, but more importantly, if the covariance is kept in this form, it can never have a negative diagonal or become asymmetric. An equivalent form, which avoids many of the square root operations required by the matrix square root yet preserves the desirable numerical properties, is the U-D decomposition form, $\mathbf{P} = \mathbf{U}\mathbf{D}\mathbf{U}^T$, where U is a unit triangular matrix (with unit diagonal), and D is a diagonal matrix.

Between the two, the U-D factorization uses the same amount of storage and somewhat less computation, and is the most commonly used square root form. (Early literature on the relative efficiency is somewhat misleading, as it assumed that square roots were much more time-consuming than divisions,[15]:69 while on 21st-century computers they are only slightly more expensive.)
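Both factorizations can be computed for a small covariance matrix as follows. This sketch only shows the decompositions themselves, not the Bierman or Thornton update algorithms; the helper `udu_decompose` and the example matrix are assumptions written for illustration, while `numpy.linalg.cholesky` is the standard NumPy routine (it returns the lower triangular factor).

```python
import numpy as np


def udu_decompose(P):
    """Factor a symmetric positive-definite P as U @ diag(d) @ U.T,
    with U unit upper triangular. No square roots are needed."""
    n = P.shape[0]
    A = np.array(P, dtype=float)      # working copy, overwritten below
    U = np.eye(n)
    d = np.zeros(n)
    for j in range(n - 1, -1, -1):
        d[j] = A[j, j]
        U[:j, j] = A[:j, j] / d[j]
        # Remove column j's contribution from the leading j-by-j block.
        A[:j, :j] -= d[j] * np.outer(U[:j, j], U[:j, j])
    return U, d


P = np.array([[4.0, 2.0, 0.6],
              [2.0, 2.0, 0.5],
              [0.6, 0.5, 1.0]])

S = np.linalg.cholesky(P)             # lower triangular, P = S @ S.T
U, d = udu_decompose(P)               # P = U @ diag(d) @ U.T

print(np.allclose(S @ S.T, P))
print(np.allclose(U @ np.diag(d) @ U.T, P))
```

Any matrix rebuilt from such factors is symmetric by construction, and it has no negative eigenvalues as long as the entries of `d` stay non-negative.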

Efficient algorithms for the Kalman prediction and update steps in the square root form were developed by G. J. Bierman and C. L. Thornton.[15][16]

The L·D·LT decomposition of the innovation covariance matrix Sk is the basis for another type of numerically efficient and robust square root filter.[17] The algorithm starts with the LU decomposition as implemented in the Linear Algebra PACKage (LAPACK). These results are further factored into the L·D·LT structure with methods given by Golub and Van Loan (algorithm 4.1.2) for a symmetric nonsingular matrix.[18] Any singular covariance matrix is pivoted so that the first diagonal partition is nonsingular and well-conditioned. The pivoting algorithm must retain any portion of the innovation covariance matrix directly corresponding to observed state-variables Hk·xk|k-1 that are associated with auxiliary observations in yk. The L·D·LT square-root filter requires orthogonalization of the observation vector.[16][17] This may be done with the inverse square-root of the covariance matrix for the auxiliary variables using Method 2 in Higham (2002, p. 263).[19]

Relationship to recursive Bayesian estimation

The Kalman filter can be considered to be one of the simplest dynamic Bayesian networks. The Kalman filter calculates estimates of the true values of measurements recursively over time using incoming measurements and a mathematical process model. Similarly, recursive Bayesian estimation calculates estimates of an unknown probability density function (PDF) recursively over time using incoming measurements and a mathematical process model.[20]

In recursive Bayesian estimation, the true state is assumed to be an unobserved Markov process, and the measurements are the observed states of a hidden Markov model (HMM).

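Because of the Markov assumption, the true state is conditionally independent of all earlier states given the immediately previous state.

$$p(\mathbf{x}_k \mid \mathbf{x}_0, \dots, \mathbf{x}_{k-1}) = p(\mathbf{x}_k \mid \mathbf{x}_{k-1})$$

Similarly the measurement at the k-th timestep is dependent only upon the current state and is conditionally independent of all other states given the current state.

$$p(\mathbf{z}_k \mid \mathbf{x}_0, \dots, \mathbf{x}_k) = p(\mathbf{z}_k \mid \mathbf{x}_k)$$

Using these assumptions the probability distribution over all states of the hidden Markov model can be written simply as:

$$p(\mathbf{x}_0, \dots, \mathbf{x}_k, \mathbf{z}_1, \dots, \mathbf{z}_k) = p(\mathbf{x}_0) \prod_{i=1}^{k} p(\mathbf{z}_i \mid \mathbf{x}_i)\, p(\mathbf{x}_i \mid \mathbf{x}_{i-1})$$

However, when the Kalman filter is used to estimate the state x, the probability distribution of interest is that associated with the current states conditioned on the measurements up to the current timestep. This is achieved by marginalizing out the previous states and dividing by the probability of the measurement set.

This leads to the predict and update steps of the Kalman filter written probabilistically. The probability distribution associated with the predicted state is the sum (integral) of the products of the probability distribution associated with the transition from the (k − 1)-th timestep to the k-th and the probability distribution associated with the previous state, over all possible $\mathbf{x}_{k-1}$.

$$p(\mathbf{x}_k \mid \mathbf{Z}_{k-1}) = \int p(\mathbf{x}_k \mid \mathbf{x}_{k-1})\, p(\mathbf{x}_{k-1} \mid \mathbf{Z}_{k-1})\, d\mathbf{x}_{k-1}$$

The measurement set up to time t is

$$\mathbf{Z}_t = \{\mathbf{z}_1, \dots, \mathbf{z}_t\}$$

The probability distribution of the update is proportional to the product of the measurement likelihood and the predicted state.

$$p(\mathbf{x}_k \mid \mathbf{Z}_k) = \frac{p(\mathbf{z}_k \mid \mathbf{x}_k)\, p(\mathbf{x}_k \mid \mathbf{Z}_{k-1})}{p(\mathbf{z}_k \mid \mathbf{Z}_{k-1})}$$

The denominator

$$p(\mathbf{z}_k \mid \mathbf{Z}_{k-1}) = \int p(\mathbf{z}_k \mid \mathbf{x}_k)\, p(\mathbf{x}_k \mid \mathbf{Z}_{k-1})\, d\mathbf{x}_k$$

is a normalization term.

The remaining probability density functions are

$$p(\mathbf{x}_k \mid \mathbf{x}_{k-1}) = \mathcal{N}(\mathbf{F}_k \mathbf{x}_{k-1} + \mathbf{B}_k \mathbf{u}_k,\ \mathbf{Q}_k)$$

$$p(\mathbf{z}_k \mid \mathbf{x}_k) = \mathcal{N}(\mathbf{H}_k \mathbf{x}_k,\ \mathbf{R}_k)$$

$$p(\mathbf{x}_{k-1} \mid \mathbf{Z}_{k-1}) = \mathcal{N}(\hat{\mathbf{x}}_{k-1|k-1},\ \mathbf{P}_{k-1|k-1})$$

Note that the PDF at the previous timestep is inductively assumed to be the estimated state and covariance. This is justified because, as an optimal estimator, the Kalman filter makes best use of the measurements; therefore the PDF for $\mathbf{x}_k$ given the measurements $\mathbf{Z}_k$ is the Kalman filter estimate.

Information filter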

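In the information filter, or inverse covariance filter, the estimated covariance and estimated state are replaced by the information matrix and information vector respectively. These are defined as:

$$\mathbf{Y}_{k|k} = \mathbf{P}_{k|k}^{-1}$$

$$\hat{\mathbf{y}}_{k|k} = \mathbf{P}_{k|k}^{-1} \hat{\mathbf{x}}_{k|k}$$

Similarly the predicted covariance and state have equivalent information forms, defined as:

$$\mathbf{Y}_{k|k-1} = \mathbf{P}_{k|k-1}^{-1}$$

$$\hat{\mathbf{y}}_{k|k-1} = \mathbf{P}_{k|k-1}^{-1} \hat{\mathbf{x}}_{k|k-1}$$

as have the measurement covariance and measurement vector, which are defined as:

$$\mathbf{I}_k = \mathbf{H}_k^T \mathbf{R}_k^{-1} \mathbf{H}_k$$

$$\mathbf{i}_k = \mathbf{H}_k^T \mathbf{R}_k^{-1} \mathbf{z}_k$$

The information update now becomes a trivial sum.

$$\mathbf{Y}_{k|k} = \mathbf{Y}_{k|k-1} + \mathbf{I}_k$$

$$\hat{\mathbf{y}}_{k|k} = \hat{\mathbf{y}}_{k|k-1} + \mathbf{i}_k$$

The main advantage of the information filter is that N measurements can be filtered at each timestep simply by summing their information matrices and vectors.

$$\mathbf{Y}_{k|k} = \mathbf{Y}_{k|k-1} + \sum_{j=1}^{N} \mathbf{I}_{k,j}$$

$$\hat{\mathbf{y}}_{k|k} = \hat{\mathbf{y}}_{k|k-1} + \sum_{j=1}^{N} \mathbf{i}_{k,j}$$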

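To predict the information filter, the information matrix and vector can be converted back to their state space equivalents, or alternatively the information space prediction can be used.

$$\mathbf{M}_k = [\mathbf{F}_k^{-1}]^T \mathbf{Y}_{k-1|k-1} \mathbf{F}_k^{-1}$$

$$\mathbf{C}_k = \mathbf{M}_k [\mathbf{M}_k + \mathbf{Q}_k^{-1}]^{-1}$$

$$\mathbf{L}_k = \mathbf{I} - \mathbf{C}_k$$

$$\mathbf{Y}_{k|k-1} = \mathbf{L}_k \mathbf{M}_k \mathbf{L}_k^T + \mathbf{C}_k \mathbf{Q}_k^{-1} \mathbf{C}_k^T$$

$$\hat{\mathbf{y}}_{k|k-1} = \mathbf{L}_k [\mathbf{F}_k^{-1}]^T \hat{\mathbf{y}}_{k-1|k-1}$$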

Note that if F and Q are time invariant these values can be cached. Note also that F and Q need to be invertible.
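The additive nature of the information update can be illustrated with a scalar state observed by several sensors in the same timestep. The sketch below is a minimal example under that scalar assumption; the function names and the `(z, h, r)` tuple layout for a measurement are hypothetical choices made for this illustration.

```python
def to_information(x, p):
    """Convert a scalar estimate (x, P) to information form (y, Y)."""
    return x / p, 1.0 / p

def information_update(y_pred, Y_pred, measurements):
    """Fuse any number of scalar measurements (z, h, r) by summation.

    Each measurement contributes I = h * h / r to the information matrix
    and i = h * z / r to the information vector.
    """
    y, Y = y_pred, Y_pred
    for z, h, r in measurements:
        Y += h * h / r
        y += h * z / r
    return y, Y

def kalman_update(x, p, z, h, r):
    """Standard scalar Kalman update, used here only for comparison."""
    k = p * h / (h * p * h + r)
    return x + k * (z - h * x), (1.0 - k * h) * p

if __name__ == "__main__":
    sensors = [(2.5, 1.0, 1.0), (1.8, 1.0, 2.0)]
    y, Y = information_update(*to_information(2.0, 4.0), sensors)
    print("information form:", y / Y, 1.0 / Y)
    x, p = 2.0, 4.0
    for z, h, r in sensors:
        x, p = kalman_update(x, p, z, h, r)
    print("sequential Kalman:", x, p)
```

Both routes give the same state and covariance; the information form simply defers the single inversion until the state estimate is actually needed.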

Fixed-lag smoother

The optimal fixed-lag smoother provides the optimal estimate of $\hat{\textbf{x}}_{k-N|k}$ for a given fixed-lag N using the measurements from $\textbf{z}_{1}$ to $\textbf{z}_{k}$. It can be derived using the previous theory via an augmented state, and the main equation of the filter, written component-wise, is the following:

$$\hat{\textbf{x}}_{t-i|t} = \hat{\textbf{x}}_{t-i|t-1} + K^{(i)} y_{t|t-1}, \qquad i = 0, \ldots, N$$

where:

- $\hat{\textbf{x}}_{t|t-1}$ is estimated via a standard Kalman filter;

- $y_{t|t-1} = z_{t} - H \hat{\textbf{x}}_{t|t-1}$ is the innovation produced considering the estimate of the standard Kalman filter;

- the various $\hat{\textbf{x}}_{t-i|t}$ with $i = 1, \ldots, N$ are new variables, i.e. they do not appear in the standard Kalman filter;

- the gains are computed via the following scheme:

$$K^{(i)} = P^{(i)} H^{T} \left[ H P H^{T} + R \right]^{-1}$$

and

$$P^{(i)} = P \left[ \left[ F - K H \right]^{T} \right]^{i}$$

where P and K are the prediction error covariance and the gains of the standard Kalman filter (i.e., $P_{t|t-1}$).

If the estimation error covariance is defined so that

$$P_{i} := E \left[ \left( \textbf{x}_{t-i} - \hat{\textbf{x}}_{t-i|t} \right)^{*} \left( \textbf{x}_{t-i} - \hat{\textbf{x}}_{t-i|t} \right) | z_{1} \ldots z_{t} \right],$$

then the improvement on the estimation of $\textbf{x}_{t-i}$ is given by:

$$P - P_{i} = \sum_{j = 0}^{i} \left[ P^{(j)} H^{T} \left[ H P H^{T} + R \right]^{-1} H \left( P^{(i)} \right)^{T} \right]$$

Fixed-interval smoothers

The optimal fixed-interval smoother provides the optimal estimate of $\hat{\textbf{x}}_{k|n}$ (k < n) using the measurements from a fixed interval $\textbf{z}_{1}$ to $\textbf{z}_{n}$. This is also called "Kalman smoothing". There are several smoothing algorithms in common use.

Rauch–Tung–Striebel

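The Rauch–Tung–Striebel (RTS) smoother[21] is an efficient two-pass algorithm for fixed interval smoothing. The main equations of the smoother are the following (assuming $\textbf{B}_{k} = \textbf{0}$):

- forward pass: regular Kalman filter algorithm

- backward pass: $\hat{\textbf{x}}_{k|n} = \tilde{\textbf{F}}_{k} \hat{\textbf{x}}_{k+1|n} + \tilde{\textbf{K}}_{k} \hat{\textbf{x}}_{k+1|k}$, where

$$\tilde{\textbf{F}}_{k} = \textbf{F}_{k}^{-1} (\textbf{I} - \textbf{Q}_{k} \textbf{P}_{k+1|k}^{-1})$$

$$\tilde{\textbf{K}}_{k} = \textbf{F}_{k}^{-1} \textbf{Q}_{k} \textbf{P}_{k+1|k}^{-1}$$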
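The two passes of the RTS smoother can be sketched for a scalar, time-invariant model with no control input. In this sketch the backward pass uses the scalar gains $\tilde{F} = (1 - Q / P_{k+1|k}) / F$ and $\tilde{K} = Q / (F P_{k+1|k})$, which are algebraically the same as the more commonly quoted gain $P_{k|k} F / P_{k+1|k}$. The covariance recursion shown is the standard RTS one and is an addition not stated in the text above; all function and variable names are illustrative assumptions.

```python
def kalman_forward(zs, f, q, h, r, x0, p0):
    """Forward pass: a regular scalar Kalman filter that stores every step."""
    x_pred, p_pred, x_filt, p_filt = [], [], [], []
    x, p = x0, p0
    for z in zs:
        xp = f * x                      # predicted state x_{k|k-1}
        pp = f * p * f + q              # predicted covariance P_{k|k-1}
        k = pp * h / (h * pp * h + r)   # Kalman gain
        x = xp + k * (z - h * xp)       # filtered state x_{k|k}
        p = (1.0 - k * h) * pp          # filtered covariance P_{k|k}
        x_pred.append(xp)
        p_pred.append(pp)
        x_filt.append(x)
        p_filt.append(p)
    return x_pred, p_pred, x_filt, p_filt

def rts_backward(x_pred, p_pred, x_filt, p_filt, f, q):
    """Backward pass over the stored forward-pass data (requires f != 0)."""
    x_s, p_s = list(x_filt), list(p_filt)
    for k in range(len(x_filt) - 2, -1, -1):
        f_tilde = (1.0 - q / p_pred[k + 1]) / f
        k_tilde = q / (f * p_pred[k + 1])
        x_s[k] = f_tilde * x_s[k + 1] + k_tilde * x_pred[k + 1]
        # Standard RTS covariance recursion (assumption, see lead-in).
        p_s[k] = p_filt[k] + f_tilde * (p_s[k + 1] - p_pred[k + 1]) * f_tilde
    return x_s, p_s

if __name__ == "__main__":
    zs = [1.0, 1.2, 0.9, 1.1]
    fwd = kalman_forward(zs, 0.9, 0.1, 1.0, 0.5, 0.0, 1.0)
    print(rts_backward(*fwd, 0.9, 0.1))
```

The last smoothed estimate equals the last filtered one, and every earlier smoothed variance is no larger than its filtered counterpart, because the backward pass adds information from later measurements.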

Modified Bryson–Frazier smoother

An alternative to the RTS algorithm is the modified Bryson–Frazier (MBF) fixed interval smoother developed by Bierman.[16] This also uses a backward pass that processes data saved from the Kalman filter forward pass. The equations for the backward pass involve the recursive computation of data which are used at each observation time to compute the smoothed state and covariance.

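The recursive equations are

$$\tilde{\Lambda}_{k} = \textbf{H}_{k}^{T} \textbf{S}_{k}^{-1} \textbf{H}_{k} + \hat{\textbf{C}}_{k}^{T} \hat{\Lambda}_{k} \hat{\textbf{C}}_{k}$$

$$\hat{\Lambda}_{k-1} = \textbf{F}_{k}^{T} \tilde{\Lambda}_{k} \textbf{F}_{k}$$

$$\hat{\Lambda}_{n} = 0$$

$$\tilde{\lambda}_{k} = -\textbf{H}_{k}^{T} \textbf{S}_{k}^{-1} \textbf{y}_{k} + \hat{\textbf{C}}_{k}^{T} \hat{\lambda}_{k}$$

$$\hat{\lambda}_{k-1} = \textbf{F}_{k}^{T} \tilde{\lambda}_{k}$$

$$\hat{\lambda}_{n} = 0$$

where $\textbf{S}_{k}$ is the residual covariance and $\hat{\textbf{C}}_{k} = \textbf{I} - \textbf{K}_{k} \textbf{H}_{k}$. The smoothed state and covariance can then be found by substitution in the equations

$$\textbf{P}_{k|n} = \textbf{P}_{k|k} - \textbf{P}_{k|k} \hat{\Lambda}_{k} \textbf{P}_{k|k}$$

$$\textbf{x}_{k|n} = \textbf{x}_{k|k} - \textbf{P}_{k|k} \hat{\lambda}_{k}$$

or

$$\textbf{P}_{k|n} = \textbf{P}_{k|k-1} - \textbf{P}_{k|k-1} \tilde{\Lambda}_{k} \textbf{P}_{k|k-1}$$

$$\textbf{x}_{k|n} = \textbf{x}_{k|k-1} - \textbf{P}_{k|k-1} \tilde{\lambda}_{k}.$$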

An important advantage of the MBF is that it does not require finding the inverse of the covariance matrix.
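A scalar sketch of the MBF recursion makes the absence of any covariance inverse visible: the only division is by the residual covariance S. The code assumes a time-invariant scalar model and uses the usual MBF adjoint quantities (written `lam_hat`, `Lam_hat`, `lam_tilde`, `Lam_tilde` here); the function name, the tuple layout of the saved data and the choice to return both substitution forms are assumptions made for illustration.

```python
def mbf_smoother(zs, f, q, h, r, x0, p0):
    """Scalar modified Bryson-Frazier smoother.

    Returns, per timestep, (x_s, p_s, x_alt, p_alt): the smoothed state and
    covariance computed from the filtered quantities, and the same values
    computed from the predicted quantities.
    """
    # Forward pass: regular Kalman filter, saving data for the backward pass.
    saved = []
    x, p = x0, p0
    for z in zs:
        x_pred = f * x
        p_pred = f * p * f + q
        s = h * p_pred * h + r        # residual covariance S_k
        y = z - h * x_pred            # residual y_k
        k = p_pred * h / s            # Kalman gain K_k
        x = x_pred + k * y
        p = (1.0 - k * h) * p_pred
        saved.append((x_pred, p_pred, x, p, s, y, 1.0 - k * h))

    # Backward pass, started from zero terminal values.
    lam_hat, Lam_hat = 0.0, 0.0
    out = [None] * len(zs)
    for idx in range(len(zs) - 1, -1, -1):
        x_pred, p_pred, x_filt, p_filt, s, y, c_hat = saved[idx]
        x_s = x_filt - p_filt * lam_hat
        p_s = p_filt - p_filt * Lam_hat * p_filt
        Lam_tilde = h * h / s + c_hat * Lam_hat * c_hat
        lam_tilde = -h * y / s + c_hat * lam_hat
        x_alt = x_pred - p_pred * lam_tilde
        p_alt = p_pred - p_pred * Lam_tilde * p_pred
        out[idx] = (x_s, p_s, x_alt, p_alt)
        # Propagate the adjoint quantities one step back in time.
        Lam_hat = f * Lam_tilde * f
        lam_hat = f * lam_tilde
    return out

if __name__ == "__main__":
    for row in mbf_smoother([1.0, 1.2, 0.9, 1.1], 0.9, 0.1, 1.0, 0.5, 0.0, 1.0):
        print(row)
```

In this sketch the two substitution forms agree at every step to rounding error, which is a convenient self-check when implementing the recursion.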

Non-linear filters

The basic Kalman filter is limited to a linear assumption. More complex systems, however, can be nonlinear. The non-linearity can be associated either with the process model or with the observation model or with both.

Extended Kalman filter

Main article: Extended Kalman filter

In the extended Kalman filter (EKF), the state transition and observation models need not be linear functions of the state but may instead be non-linear functions, provided they are differentiable.

$$\textbf{x}_{k} = f(\textbf{x}_{k-1}, \textbf{u}_{k}) + \textbf{w}_{k}$$

$$\textbf{z}_{k} = h(\textbf{x}_{k}) + \textbf{v}_{k}$$

The function f can be used to compute the predicted state from the previous estimate and similarly the function h can be used to compute the predicted measurement from the predicted state. However, f and h cannot be applied to the covariance directly. Instead a matrix of partial derivatives (the Jacobian) is computed.

$$\textbf{F}_{k} = \left. \frac{\partial f}{\partial \textbf{x}} \right|_{\hat{\textbf{x}}_{k-1|k-1}, \textbf{u}_{k}} \qquad \textbf{H}_{k} = \left. \frac{\partial h}{\partial \textbf{x}} \right|_{\hat{\textbf{x}}_{k|k-1}}$$

At each timestep the Jacobian is evaluated at the current predicted state. These matrices can be used in the Kalman filter equations. This process essentially linearizes the non-linear function around the current estimate.
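The linearization step can be sketched for a scalar state with additive noise. The functions f and h propagate the estimate itself, while their derivatives stand in for F and H in the covariance and gain computations. The function signature and the example model in the usage lines are hypothetical and chosen only for illustration.

```python
import math

def ekf_step(x, p, z, f, h, f_jac, h_jac, q, r):
    """One scalar EKF predict/update cycle.

    f, h         : non-linear transition and observation functions
    f_jac, h_jac : their derivatives (scalar Jacobians)
    q, r         : process and measurement noise variances
    """
    # Predict: the state goes through f, the covariance through the Jacobian.
    x_pred = f(x)
    F = f_jac(x)
    p_pred = F * p * F + q
    # Update: the measurement is predicted with h, the gain uses the Jacobian
    # of h evaluated at the predicted state.
    H = h_jac(x_pred)
    s = H * p_pred * H + r
    k = p_pred * H / s
    x_new = x_pred + k * (z - h(x_pred))
    p_new = (1.0 - k * H) * p_pred
    return x_new, p_new

if __name__ == "__main__":
    # Hypothetical model: mildly non-linear dynamics, quadratic sensor.
    x, p = ekf_step(
        1.0, 0.5, 1.3,
        f=lambda x: x + 0.1 * math.sin(x),
        h=lambda x: x * x,
        f_jac=lambda x: 1.0 + 0.1 * math.cos(x),
        h_jac=lambda x: 2.0 * x,
        q=0.01, r=0.1,
    )
    print(x, p)
```

When f and h are linear the Jacobians are constant and the step reduces to the ordinary Kalman filter, which is a quick way to test an implementation.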

Unscented Kalman filter

When the state transition and observation models – that is, the predict and update functions f and h (see above) – are highly non-linear, the extended Kalman filter can give particularly poor performance.[22] This is because the covariance is propagated through linearization of the underlying non-linear model. The unscented Kalman filter (UKF) [22] uses a deterministic sampling technique known as the unscented transform to pick a minimal set of sample points (called sigma points) around the mean. These sigma points are then propagated through the non-linear functions, from which the mean and covariance of the estimate are then recovered. The result is a filter which more accurately captures the true mean and covariance. (This can be verified using Monte Carlo sampling or through a Taylor series expansion of the posterior statistics.) In addition, this technique removes the requirement to explicitly calculate Jacobians, which for complex functions can be a difficult task in itself (i.e., requiring complicated derivatives if done analytically or being computationally costly if done numerically).

- Predict

As with the EKF, the UKF prediction can be used independently from the UKF update, in combination with a linear (or indeed EKF) update, or vice versa.

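The estimated state and covariance are augmented with the mean and covariance of the process noise.

$$\textbf{x}_{k-1|k-1}^{a} = [ \hat{\textbf{x}}_{k-1|k-1}^{T} \quad E[\textbf{w}_{k}^{T}] \ ]^{T}$$

$$\textbf{P}_{k-1|k-1}^{a} = \begin{bmatrix} \textbf{P}_{k-1|k-1} & 0 \\ 0 & \textbf{Q}_{k} \end{bmatrix}$$

A set of 2L + 1 sigma points is derived from the augmented state and covariance where L is the dimension of the augmented state.

$$\chi_{k-1|k-1}^{0} = \textbf{x}_{k-1|k-1}^{a}$$

$$\chi_{k-1|k-1}^{i} = \textbf{x}_{k-1|k-1}^{a} + \left( \sqrt{ (L + \lambda) \textbf{P}_{k-1|k-1}^{a} } \right)_{i}, \qquad i = 1, \ldots, L$$

$$\chi_{k-1|k-1}^{i} = \textbf{x}_{k-1|k-1}^{a} - \left( \sqrt{ (L + \lambda) \textbf{P}_{k-1|k-1}^{a} } \right)_{i-L}, \qquad i = L+1, \ldots, 2L$$

where $\left( \sqrt{ (L + \lambda) \textbf{P}_{k-1|k-1}^{a} } \right)_{i}$ is the ith column of the matrix square root of $(L + \lambda) \textbf{P}_{k-1|k-1}^{a}$, using the definition: square root A of matrix B satisfies $B \triangleq A A^{T}$.

The matrix square root should be calculated using numerically efficient and stable methods such as the Cholesky decomposition.

The sigma points are propagated through the transition function f.

$$\chi_{k|k-1}^{i} = f(\chi_{k-1|k-1}^{i}), \qquad i = 0, \ldots, 2L$$

where $f : R^{L} \rightarrow R^{|\textbf{x}|}$. The weighted sigma points are recombined to produce the predicted state and covariance.

$$\hat{\textbf{x}}_{k|k-1} = \sum_{i=0}^{2L} W_{s}^{i} \chi_{k|k-1}^{i}$$

$$\textbf{P}_{k|k-1} = \sum_{i=0}^{2L} W_{c}^{i}\ [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}] [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}]^{T}$$

where the weights for the state and covariance are given by:

$$W_{s}^{0} = \frac{\lambda}{L + \lambda}$$

$$W_{c}^{0} = \frac{\lambda}{L + \lambda} + (1 - \alpha^{2} + \beta)$$

$$W_{s}^{i} = W_{c}^{i} = \frac{1}{2(L + \lambda)}, \qquad i = 1, \ldots, 2L$$

$$\lambda = \alpha^{2} (L + \kappa) - L$$

α and κ control the spread of the sigma points. β is related to the distribution of x. Normal values are $\alpha = 10^{-3}$, κ = 0 and β = 2. If the true distribution of x is Gaussian, β = 2 is optimal.[23]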
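The sigma-point construction and recombination can be sketched without external libraries. The code below builds the 2L + 1 points from a Cholesky factor, uses weights with λ = α²(L + κ) − L, and recombines the propagated points into a mean and covariance. For brevity it applies the transform to a plain (non-augmented) mean and covariance; the helper names `cholesky`, `sigma_points` and `unscented_transform` are assumptions made for this illustration.

```python
import math

def cholesky(B):
    """Lower-triangular A with A A^T = B, for symmetric positive-definite B."""
    n = len(B)
    A = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(A[i][k] * A[j][k] for k in range(j))
            if i == j:
                A[i][j] = math.sqrt(B[i][i] - s)
            else:
                A[i][j] = (B[i][j] - s) / A[j][j]
    return A

def sigma_points(mean, cov, alpha=1e-3, kappa=0.0, beta=2.0):
    """Return the 2L + 1 sigma points and the state/covariance weights."""
    L = len(mean)
    lam = alpha ** 2 * (L + kappa) - L
    A = cholesky([[(L + lam) * c for c in row] for row in cov])
    points = [list(mean)]
    for sign in (1.0, -1.0):          # points 1..L, then L+1..2L
        for i in range(L):            # i-th column of the square root
            points.append([mean[r] + sign * A[r][i] for r in range(L)])
    w_s = [lam / (L + lam)] + [0.5 / (L + lam)] * (2 * L)
    w_c = [lam / (L + lam) + (1.0 - alpha ** 2 + beta)] + [0.5 / (L + lam)] * (2 * L)
    return points, w_s, w_c

def unscented_transform(func, mean, cov, **kwargs):
    """Propagate (mean, cov) through func and recombine the sigma points."""
    points, w_s, w_c = sigma_points(mean, cov, **kwargs)
    ys = [func(pt) for pt in points]
    m = len(ys[0])
    y_mean = [sum(w * y[r] for w, y in zip(w_s, ys)) for r in range(m)]
    y_cov = [[sum(w * (y[r] - y_mean[r]) * (y[c] - y_mean[c])
                  for w, y in zip(w_c, ys)) for c in range(m)] for r in range(m)]
    return y_mean, y_cov

if __name__ == "__main__":
    polar_to_xy = lambda p: [p[0] * math.cos(p[1]), p[0] * math.sin(p[1])]
    print(unscented_transform(polar_to_xy, [1.0, 0.5], [[0.01, 0.0], [0.0, 0.04]]))
```

For a linear function the recombined mean and covariance match the exact propagated values, which gives a simple correctness check; no Jacobian is ever evaluated.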

- Update

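The predicted state and covariance are augmented as before, except now with the mean and covariance of the measurement noise.

$$\textbf{x}_{k|k-1}^{a} = [ \hat{\textbf{x}}_{k|k-1}^{T} \quad E[\textbf{v}_{k}^{T}] \ ]^{T}$$

$$\textbf{P}_{k|k-1}^{a} = \begin{bmatrix} \textbf{P}_{k|k-1} & 0 \\ 0 & \textbf{R}_{k} \end{bmatrix}$$

As before, a set of 2L + 1 sigma points is derived from the augmented state and covariance where L is the dimension of the augmented state.

$$\chi_{k|k-1}^{0} = \textbf{x}_{k|k-1}^{a}$$

$$\chi_{k|k-1}^{i} = \textbf{x}_{k|k-1}^{a} + \left( \sqrt{ (L + \lambda) \textbf{P}_{k|k-1}^{a} } \right)_{i}, \qquad i = 1, \ldots, L$$

$$\chi_{k|k-1}^{i} = \textbf{x}_{k|k-1}^{a} - \left( \sqrt{ (L + \lambda) \textbf{P}_{k|k-1}^{a} } \right)_{i-L}, \qquad i = L+1, \ldots, 2L$$

Alternatively, if the UKF prediction has been used, the sigma points themselves can be augmented along the following lines

$$\chi_{k|k-1} := [ \chi_{k|k-1}^T \quad E[\textbf{v}_{k}^{T}] \ ]^{T} \pm \sqrt{ (L + \lambda) \textbf{R}_{k}^{a} }$$

where

$$\textbf{R}_{k}^{a} = \begin{bmatrix} 0 & 0 \\ 0 & \textbf{R}_{k} \end{bmatrix}$$

The sigma points are projected through the observation function h.

$$\gamma_{k}^{i} = h(\chi_{k|k-1}^{i}), \qquad i = 0, \ldots, 2L$$

The weighted sigma points are recombined to produce the predicted measurement and predicted measurement covariance.

$$\hat{\textbf{z}}_{k} = \sum_{i=0}^{2L} W_{s}^{i} \gamma_{k}^{i}$$

$$\textbf{P}_{z_{k}z_{k}} = \sum_{i=0}^{2L} W_{c}^{i}\ [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}] [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}]^{T}$$

The state-measurement cross-covariance matrix,

$$\textbf{P}_{x_{k}z_{k}} = \sum_{i=0}^{2L} W_{c}^{i}\ [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}] [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}]^{T}$$

is used to compute the UKF Kalman gain.

$$K_{k} = \textbf{P}_{x_{k}z_{k}} \textbf{P}_{z_{k}z_{k}}^{-1}$$

As with the Kalman filter, the updated state is the predicted state plus the innovation weighted by the Kalman gain,

$$\hat{\textbf{x}}_{k|k} = \hat{\textbf{x}}_{k|k-1} + K_{k} ( \textbf{z}_{k} - \hat{\textbf{z}}_{k} )$$

and the updated covariance is the predicted covariance, minus the predicted measurement covariance, weighted by the Kalman gain.

$$\textbf{P}_{k|k} = \textbf{P}_{k|k-1} - K_{k} \textbf{P}_{z_{k}z_{k}} K_{k}^{T}$$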

Kalman–Bucy filter

The Kalman–Bucy filter (named after Richard Snowden Bucy) is a continuous time version of the Kalman filter.[24][25]

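It is based on the state space model

$$\frac{d}{dt}\textbf{x}(t) = \textbf{F}(t)\textbf{x}(t) + \textbf{B}(t)\textbf{u}(t) + \textbf{w}(t)$$

$$\textbf{z}(t) = \textbf{H}(t)\textbf{x}(t) + \textbf{v}(t)$$

where the covariances of the noise terms $\textbf{w}(t)$ and $\textbf{v}(t)$ are given by $\textbf{Q}(t)$ and $\textbf{R}(t)$, respectively.

The filter consists of two differential equations, one for the state estimate and one for the covariance:

$$\frac{d}{dt}\hat{\textbf{x}}(t) = \textbf{F}(t)\hat{\textbf{x}}(t) + \textbf{B}(t)\textbf{u}(t) + \textbf{K}(t) (\textbf{z}(t) - \textbf{H}(t)\hat{\textbf{x}}(t))$$

$$\frac{d}{dt}\textbf{P}(t) = \textbf{F}(t)\textbf{P}(t) + \textbf{P}(t)\textbf{F}^{T}(t) + \textbf{Q}(t) - \textbf{K}(t)\textbf{R}(t)\textbf{K}^{T}(t)$$

where the Kalman gain is given by

$$\textbf{K}(t) = \textbf{P}(t)\textbf{H}^{T}(t)\textbf{R}^{-1}(t)$$

Note that in this expression for $\textbf{K}(t)$ the covariance of the observation noise $\textbf{R}(t)$ represents at the same time the covariance of the prediction error (or innovation) $\tilde{\textbf{y}}(t) = \textbf{z}(t) - \textbf{H}(t)\hat{\textbf{x}}(t)$; these covariances are equal only in the case of continuous time.[26]

The distinction between the prediction and update steps of discrete-time Kalman filtering does not exist in continuous time.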

The second differential equation, for the covariance, is an example of a Riccati equation.
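A simple way to explore the two differential equations is to integrate them with a fixed-step Euler scheme for a scalar, time-invariant model without control input. The sketch below does this under those assumptions; the function name, the Euler discretization and the sampled measurement list `zs` are illustrative choices, and a production implementation would normally use a better ODE solver.

```python
def kalman_bucy_euler(zs, dt, f, h, q, r, x0, p0):
    """Integrate the scalar Kalman-Bucy equations with forward Euler.

    zs : samples of the continuous measurement z(t), spaced dt apart
    Returns the list of (x_hat, P) after each Euler step.
    """
    x, p = x0, p0
    history = []
    for z in zs:
        k = p * h / r                        # K(t) = P H^T R^{-1}
        dx = f * x + k * (z - h * x)         # state-estimate equation
        dp = 2.0 * f * p + q - k * r * k     # Riccati equation
        x += dt * dx
        p += dt * dp
        history.append((x, p))
    return history

if __name__ == "__main__":
    hist = kalman_bucy_euler([0.0] * 10000, 1e-3, -1.0, 1.0, 1.0, 1.0, 0.0, 1.0)
    # For f = -1, h = q = r = 1 the steady state solves P^2 + 2P - 1 = 0.
    print(hist[-1][1], 2.0 ** 0.5 - 1.0)
```

For a stable scalar model the covariance settles at the positive root of the algebraic Riccati equation, here 2f P + q − P² h² / r = 0.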

Hybrid Kalman filter

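Most physical systems are represented as continuous-time models while discrete-time measurements are frequently taken for state estimation via a digital processor. Therefore, the system model and measurement model are given by

$$\dot{\textbf{x}}(t) = \textbf{F}(t)\textbf{x}(t) + \textbf{B}(t)\textbf{u}(t) + \textbf{w}(t), \qquad \textbf{w}(t) \sim N(\textbf{0}, \textbf{Q}(t))$$

$$\textbf{z}_{k} = \textbf{H}_{k}\textbf{x}_{k} + \textbf{v}_{k}, \qquad \textbf{v}_{k} \sim N(\textbf{0}, \textbf{R}_{k})$$

where $\textbf{x}_{k} = \textbf{x}(t_{k})$.

- Initialize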

![\hat{\mathbf{x}}_{0|0}=E\bigl[\mathbf{x}(t_0)\bigr], \mathbf{P}_{0|0}=Var\bigl[\mathbf{x}(t_0)\bigr]](f/40fe4d2b2c67d47b077805344c1e45c7.png)

- Predict

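The prediction equations are derived from those of the continuous-time Kalman filter without update from measurements, i.e., $\textbf{K}(t) = 0$. The predicted state and covariance are calculated respectively by solving a set of differential equations with the initial value equal to the estimate at the previous step.

$$\dot{\hat{\textbf{x}}}(t) = \textbf{F}(t)\hat{\textbf{x}}(t) + \textbf{B}(t)\textbf{u}(t), \quad \hat{\textbf{x}}(t_{k-1}) = \hat{\textbf{x}}_{k-1|k-1} \quad \Rightarrow \quad \hat{\textbf{x}}_{k|k-1} = \hat{\textbf{x}}(t_{k})$$

$$\dot{\textbf{P}}(t) = \textbf{F}(t)\textbf{P}(t) + \textbf{P}(t)\textbf{F}(t)^{T} + \textbf{Q}(t), \quad \textbf{P}(t_{k-1}) = \textbf{P}_{k-1|k-1} \quad \Rightarrow \quad \textbf{P}_{k|k-1} = \textbf{P}(t_{k})$$

- Update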

The update equations are identical to those of the discrete-time Kalman filter.
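The hybrid scheme can be sketched for a scalar, time-invariant model without control input: the prediction integrates the state and covariance differential equations from one measurement time to the next (with the gain set to zero), and the update is the ordinary discrete one. Forward Euler with a fixed number of sub-steps is used purely for simplicity; the function names and the `steps` parameter are assumptions made for this illustration.

```python
def hybrid_predict(x, p, f, q, dt, steps=1000):
    """Integrate dx/dt = f x and dP/dt = 2 f P + q over an interval dt."""
    step = dt / steps
    for _ in range(steps):
        x, p = x + step * (f * x), p + step * (2.0 * f * p + q)
    return x, p

def discrete_update(x, p, z, h, r):
    """Ordinary discrete-time scalar Kalman update."""
    k = p * h / (h * p * h + r)
    return x + k * (z - h * x), (1.0 - k * h) * p

def hybrid_step(x, p, z, f, q, h, r, dt):
    """Continuous-time prediction followed by a discrete-time update."""
    x_pred, p_pred = hybrid_predict(x, p, f, q, dt)
    return discrete_update(x_pred, p_pred, z, h, r)

if __name__ == "__main__":
    x, p = 2.0, 1.0
    for z in [1.1, 0.7, 0.5]:  # measurements arriving one time unit apart
        x, p = hybrid_step(x, p, z, f=-0.5, q=0.2, h=1.0, r=0.3, dt=1.0)
        print(x, p)
```

For this scalar model the prediction has the closed form $x(t) = x_0 e^{ft}$ and $P(t) = e^{2ft} P_0 + q (e^{2ft} - 1) / (2f)$, which can be used to check the accuracy of the numerical integration.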

Kalman filter variants for the recovery of sparse signals

The traditional Kalman filter has also been employed for the recovery of sparse, possibly dynamic, signals from noisy observations. Both [27] and [28] use notions from the theory of compressed sensing/sampling, such as the restricted isometry property and related probabilistic recovery arguments, for sequentially estimating the sparse state in intrinsically low-dimensional systems.

Applications

- Attitude and Heading Reference Systems

- Autopilot

- Battery state of charge (SoC) estimation

- Brain-computer interface

- Chaotic signals

- Dynamic positioning

- Economics, in particular macroeconomics, time series, and econometrics

- Inertial guidance system

- Orbit Determination

- Radar tracker

- Satellite navigation systems

- Seismology

- Simultaneous localization and mapping

- Speech enhancement

- Weather forecasting

- Navigation system

- 3D modeling

See also

- Alpha beta filter

- Covariance intersection

- Data assimilation

- Ensemble Kalman filter

- Extended Kalman filter

- Invariant extended Kalman filter

- Fast Kalman filter

- Compare with: Wiener filter, and the multimodal Particle filter estimator.

- Filtering problem (stochastic processes)

- Kernel adaptive filter

- Non-linear filter

- Predictor corrector

- Recursive least squares

- Sliding mode control – describes a sliding mode observer that has similar noise performance to the Kalman filter

- Separation principle

- Zakai equation

- Stochastic differential equations

- Volterra series

References

- ^ Steffen L. Lauritzen. "Time series analysis in 1880. A discussion of contributions made by T.N. Thiele". International Statistical Review 49, 1981, 319-333.

- ^ Steffen L. Lauritzen, Thiele: Pioneer in Statistics, Oxford University Press, 2002. ISBN 0-19-850972-3.

- ^ Stratonovich, R.L. (1959). Optimum nonlinear systems which bring about a separation of a signal with constant parameters from noise. Radiofizika, 2:6, pp. 892–901.

- ^ Stratonovich, R.L. (1959). On the theory of optimal non-linear filtering of random functions. Theory of Probability and its Applications, 4, pp. 223–225.

- ^ Stratonovich, R.L. (1960) Application of the Markov processes theory to optimal filtering. Radio Engineering and Electronic Physics, 5:11, pp. 1–19.

- ^ Stratonovich, R.L. (1960). Conditional Markov Processes. Theory of Probability and its Applications, 5, pp. 156–178.

- ^ Ingvar Strid; Karl Walentin (April 2009). "Block Kalman Filtering for Large-Scale DSGE Models". Computational Economics (Springer) 33 (3): 277–304. doi:10.1007/s10614-008-9160-4. http://www.riksbank.com/upload/Dokument_riksbank/Kat_publicerat/WorkingPapers/2008/wp224ny.pdf

- ^ Martin Møller Andreasen (2008). "Non-linear DSGE Models, The Central Difference Kalman Filter, and The Mean Shifted Particle Filter". ftp://ftp.econ.au.dk/creates/rp/08/rp08_33.pdf

- ^ Roweis, S. and Ghahramani, Z., A unifying review of linear Gaussian models, Neural Comput. Vol. 11, No. 2, (February 1999), pp. 305–345.

- ^ Hamilton, J. (1994), Time Series Analysis, Princeton University Press. Chapter 13, 'The Kalman Filter'.

- ^ "Murali Rajamani, PhD Thesis" Data-based Techniques to Improve State Estimation in Model Predictive Control, University of Wisconsin-Madison, October 2007

- ^ Murali R. Rajamani and James B. Rawlings. Estimation of the disturbance structure from data using semidefinite programming and optimal weighting. Automatica, 45:142-148, 2009.

- ^ http://jbrwww.che.wisc.edu/software/als/

- ^ Anderson, Brian D. O.; Moore, John B. (1979). Optimal Filtering. New York: Prentice Hall Inc. pp. 129–133. ISBN 0136381227.

- ^ a b Thornton, Catherine L. (15 October 1976). Triangular Covariance Factorizations for Kalman Filtering. (PhD thesis). NASA. NASA Technical Memorandum 33-798. http://ntrs.nasa.gov/archive/nasa/casi.ntrs.nasa.gov/19770005172_1977005172.pdf

- ^ a b c Bierman, G.J. (1977). Factorization Methods for Discrete Sequential Estimation. Academic Press

- ^ a b Bar-Shalom, Yaakov; Li, X. Rong; Kirubarajan, Thiagalingam (July 2001). Estimation with Applications to Tracking and Navigation. New York: John Wiley & Sons. pp. 308–317. ISBN 9780471416555.

- ^ Golub, Gene H.; Van Loan, Charles F. (1996). Matrix Computations. Johns Hopkins Studies in the Mathematical Sciences (Third ed.). Baltimore, Maryland: Johns Hopkins University. p. 139. ISBN 9780801854149.

- ^ Higham, Nicholas J. (2002). Accuracy and Stability of Numerical Algorithms (Second ed.). Philadelphia, PA: Society for Industrial and Applied Mathematics. pp. 680. ISBN 9780898715217.

- ^ C. Johan Masreliez, R D Martin (1977); Robust Bayesian estimation for the linear model and robustifying the Kalman filter, IEEE Trans. Automatic Control

- ^ Rauch, H.E.; Tung, F.; Striebel, C. T. (August 1965). "Maximum likelihood estimates of linear dynamic systems". AIAA J 3 (8): 1445–1450. doi:10.2514/3.3166. http://pdf.aiaa.org/getfile.cfm?urlX=7%3CWIG7D%2FQKU%3E6B5%3AKF2Z%5CD%3A%2B82%2A%40%24%5E%3F%40%20%0A&urla=%25%2ARL%2F%220L%20%0A&urlb=%21%2A%20%20%20%0A&urlc=%21%2A0%20%20%0A&urld=%21%2A0%20%20%0A&urle=%27%2BB%2C%27%22%20%22KT0%20%20%0A

- ^ a b Julier, S.J.; Uhlmann, J.K. (1997). "A new extension of the Kalman filter to nonlinear systems". Int. Symp. Aerospace/Defense Sensing, Simul. and Controls 3. http://www.cs.unc.edu/~welch/kalman/media/pdf/Julier1997_SPIE_KF.pdf. Retrieved 2008-05-03.

- ^ Wan, Eric A. and van der Merwe, Rudolph "The Unscented Kalman Filter for Nonlinear Estimation"

- ^ Bucy, R.S. and Joseph, P.D., Filtering for Stochastic Processes with Applications to Guidance, John Wiley & Sons, 1968; 2nd Edition, AMS Chelsea Publ., 2005. ISBN 0-8218-3782-6

- ^ Jazwinski, Andrew H., Stochastic processes and filtering theory, Academic Press, New York, 1970. ISBN 0-12-381550-9

- ^ Kailath, Thomas, "An innovation approach to least-squares estimation Part I: Linear filtering in additive white noise", IEEE Transactions on Automatic Control, 13(6), 646-655, 1968

- ^ Carmi, A. and Gurfil, P. and Kanevsky, D. , "Methods for sparse signal recovery using Kalman filtering with embedded pseudo-measurement norms and quasi-norms", IEEE Transactions on Signal Processing, 58(4), 2405–2409, 2010

- ^ Vaswani, N. , "Kalman Filtered Compressed Sensing", 15th International Conference on Image Processing, 2008

Further reading

- Gelb, A. (1974). Applied Optimal Estimation. MIT Press.

- Kalman, R.E. (1960). "A new approach to linear filtering and prediction problems". Journal of Basic Engineering 82 (1): 35–45. http://www.elo.utfsm.cl/~ipd481/Papers%20varios/kalman1960.pdf. Retrieved 2008-05-03.

- Kalman, R.E.; Bucy, R.S. (1961). New Results in Linear Filtering and Prediction Theory. http://www.dtic.mil/srch/doc?collection=t2&id=ADD518892. Retrieved 2008-05-03.

- Harvey, A.C. (1990). Forecasting, Structural Time Series Models and the Kalman Filter. Cambridge University Press.

- Roweis, S.; Ghahramani, Z. (1999). "A Unifying Review of Linear Gaussian Models". Neural Computation 11 (2): 305–345. doi:10.1162/089976699300016674. PMID 9950734.

- Simon, D. (2006). Optimal State Estimation: Kalman, H Infinity, and Nonlinear Approaches. Wiley-Interscience. http://academic.csuohio.edu/simond/estimation/.

- Stengel, R.F. (1994). Optimal Control and Estimation. Dover Publications. ISBN 0-486-68200-5. http://www.princeton.edu/~stengel/OptConEst.html.

- Warwick, K. (1987). "Optimal observers for ARMA models". International Journal of Control 46 (5): 1493–1503. doi:10.1080/00207178708933989. http://www.informaworld.com/index/779885789.pdf. Retrieved 2008-05-03.

- Bierman, G.J. (1977). Factorization Methods for Discrete Sequential Estimation. 128. Mineola, N.Y.: Dover Publications. ISBN 9780486449814.

- Bozic, S.M. (1994). Digital and Kalman filtering. Butterworth-Heinemann.

- Haykin, S. (2002). Adaptive Filter Theory. Prentice Hall.

- Liu, W.; Principe, J.C. and Haykin, S. (2010). Kernel Adaptive Filtering: A Comprehensive Introduction. John Wiley.

- Manolakis, D.G. (1999). Statistical and Adaptive signal processing. Artech House.

- Welch, Greg; Bishop, Gary (1997). SCAAT: Incremental Tracking with Incomplete Information. ACM Press/Addison-Wesley Publishing Co. pp. 333–344. doi:10.1145/258734.258876. ISBN 0-89791-896-7. http://www.cs.unc.edu/~welch/media/pdf/scaat.pdf.

- Jazwinski, Andrew H. (1970). Stochastic Processes and Filtering. Mathematics in Science and Engineering. New York: Academic Press. pp. 376. ISBN 0123815509.

- Maybeck, Peter S. (1979). Stochastic Models, Estimation, and Control. Mathematics in Science and Engineering. 141-1. New York: Academic Press. pp. 423. ISBN 0124807011.

- Moriya, N. (2011). Primer to Kalman Filtering: A Physicist Perspective. New York: Nova Science Publishers, Inc. ISBN 9781616683115.

- Chui, Charles K.; Chen, Guanrong (2009). Kalman Filtering with Real-Time Applications. Springer Series in Information Sciences. 17 (4th ed.). New York: Springer. pp. 229. ISBN 9783540878483.

- Spivey, Ben; Hedengren, J. D. and Edgar, T. F. (2010). "Constrained Nonlinear Estimation for Industrial Process Fouling". Industrial & Engineering Chemistry Research 49 (17): 7824–7831. doi:10.1021/ie9018116. http://pubs.acs.org/doi/abs/10.1021/ie9018116.

- Thomas Kailath, Ali H. Sayed, and Babak Hassibi, Linear Estimation, Prentice-Hall, NJ, 2000, ISBN 978-0-13-022464-4.

- Ali H. Sayed, Adaptive Filters, Wiley, NJ, 2008, ISBN 978-0-470-25388-5.

External links

- A New Approach to Linear Filtering and Prediction Problems, by R. E. Kalman, 1960

- Kalman–Bucy Filter, a good derivation of the Kalman–Bucy Filter

- MIT Video Lecture on the Kalman filter

- An Introduction to the Kalman Filter, SIGGRAPH 2001 Course, Greg Welch and Gary Bishop

- Kalman filtering chapter from Stochastic Models, Estimation, and Control, vol. 1, by Peter S. Maybeck

- Kalman Filter webpage, with lots of links

- Kalman Filtering

- Kalman Filters, thorough introduction to several types, together with applications to Robot Localization

- Kalman filters used in Weather models, SIAM News, Volume 36, Number 8, October 2003.

- Critical Evaluation of Extended Kalman Filtering and Moving-Horizon Estimation, Ind. Eng. Chem. Res., 44 (8), 2451–2460, 2005.

- Source code for the propeller microprocessor: Well documented source code written for the Parallax propeller processor.

- Gerald J. Bierman's Estimation Subroutine Library: Corresponds to the code in the research monograph "Factorization Methods for Discrete Sequential Estimation" originally published by Academic Press in 1977. Republished by Dover

- Matlab Toolbox of Kalman Filtering applied to Simultaneous Localization and Mapping: Vehicle moving in 1D, 2D and 3D

- Derivation of a 6D EKF solution to Simultaneous Localization and Mapping (In old version PDF). See also the tutorial on implementing a Kalman Filter with the MRPT C++ libraries.

- The Kalman Filter Explained A very simple tutorial.

- The Kalman Filter in Reproducing Kernel Hilbert Spaces A comprehensive introduction.

- Matlab code to estimate Cox–Ingersoll–Ross interest rate model with Kalman Filter: Corresponds to the paper "estimating and testing exponential-affine term structure models by kalman filter" published by Review of Quantitative Finance and Accounting in 1999.

- Extended Kalman Filters explained in the context of Simulation, Estimation, Control, and Optimization

Categories:

- Control theory

- Non-linear filters

- Linear filters

- Signal estimation

- Stochastic differential equations

- Robot control

- Markov models

- Hungarian inventions

Wikimedia Foundation. 2010.

![\textrm{E}[\textbf{x}_k - \hat{\textbf{x}}_{k|k}] = \textrm{E}[\textbf{x}_k - \hat{\textbf{x}}_{k|k-1}] = 0](2/c9203d41067d91d378d6856103fedfd2.png)

![\textrm{E}[\tilde{\textbf{y}}_k] = 0](a/b5a54dc85106b58adbb9909c0b3fb1a3.png)

![\textbf{R} = \textrm{E}[\textbf{v}_k \textbf{v}_k^{\text{T}}] = \begin{bmatrix} \sigma_z^2 \end{bmatrix}](f/d3f4efc362f41c6e1d79f2933c54eb7f.png)

![\textbf{M}_{k} = [\textbf{F}_{k}^{-1}]^{\text{T}} \textbf{Y}_{k-1|k-1} \textbf{F}_{k}^{-1}](5/d554b7423105ec10f132c37fc770d877.png)

![\textbf{C}_{k} = \textbf{M}_{k} [\textbf{M}_{k}+\textbf{Q}_{k}^{-1}]^{-1}](f/adfa97ba0b29a74339ab5e8518af11a5.png)

![\hat{\textbf{y}}_{k|k-1} = \textbf{L}_{k} [\textbf{F}_{k}^{-1}]^{\text{T}}\hat{\textbf{y}}_{k-1|k-1}](0/1b0bbe1e3c86059ccb560f316a3d4f7f.png)

![K^{(i)} = P^{(i)} H^{T} \left[ H P H^{T} + R \right]^{-1}](c/84c9732d7cc69960772a191e4d09eaed.png)

![P^{(i)} = P \left[ \left[ F - K H \right]^{T} \right]^{i}](d/24d65d3a8c4f87b6d2902be6ae846da9.png)

![P_{i} := E \left[ \left( \textbf{x}_{t-i} - \hat{\textbf{x}}_{t-i|t} \right)^{*} \left( \textbf{x}_{t-i} - \hat{\textbf{x}}_{t-i|t} \right) | z_{1} \ldots z_{t} \right],](b/71baadf562e6608af0b3305bb4081f27.png)

![P - P_{i} = \sum_{j = 0}^{i} \left[ P^{(j)} H^{T} \left[ H P H^{T} + R \right]^{-1} H \left( P^{(i)} \right)^{T} \right]](0/1607f4f21976ef2cac996926f36aad95.png)

![\textbf{x}_{k-1|k-1}^{a} = [ \hat{\textbf{x}}_{k-1|k-1}^{T} \quad E[\textbf{w}_{k}^{T}] \ ]^{T}](d/f4d4df261c8627da3d7f5aa45296b5c7.png)

![\textbf{P}_{k|k-1} = \sum_{i=0}^{2L} W_{c}^{i}\ [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}] [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}]^{T}](2/a326e08e696a35078e91f0703ebed17a.png)

![\textbf{x}_{k|k-1}^{a} = [ \hat{\textbf{x}}_{k|k-1}^{T} \quad E[\textbf{v}_{k}^{T}] \ ]^{T}](e/e4eac768b9062a82ff9a290308698220.png)

![\chi_{k|k-1} := [ \chi_{k|k-1}^T \quad E[\textbf{v}_{k}^{T}] \ ]^{T} \pm \sqrt{ (L + \lambda) \textbf{R}_{k}^{a} }](5/5d55f9ee8e1fb9a2ad270d6119a6bee7.png)

![\textbf{P}_{z_{k}z_{k}} = \sum_{i=0}^{2L} W_{c}^{i}\ [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}] [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}]^{T}](3/6d3da795ea81bc12de85506725726b27.png)

![\textbf{P}_{x_{k}z_{k}} = \sum_{i=0}^{2L} W_{c}^{i}\ [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}] [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}]^{T}](b/adb7df65346664015ca5683b1972acaa.png)