- CMA-ES

-

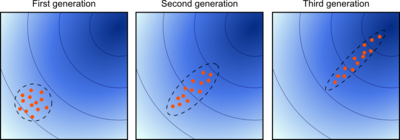

CMA-ES stands for Covariance Matrix Adaptation Evolution Strategy. Evolution strategies (ES) are stochastic, derivative-free methods for numerical optimization of non-linear or non-convex continuous optimization problems. They belong to the class of evolutionary algorithms and evolutionary computation. An evolutionary algorithm is broadly based on the principle of biological evolution, namely the repeated interplay of variation (via mutation and recombination) and selection: in each generation (iteration) new individuals (candidate solutions, denoted as x) are generated by variation, usually in a stochastic way, and then some individuals are selected for the next generation based on their fitness or objective function value f(x). Like this, over the generation sequence, individuals with better and better f-values are generated.

In an evolution strategy, new candidate solutions are sampled according to a multivariate normal distribution in the

. Pairwise dependencies between the variables in this distribution are described by a covariance matrix. The covariance matrix adaptation (CMA) is a method to update the covariance matrix of this distribution. This is particularly useful, if the function f is ill-conditioned.

. Pairwise dependencies between the variables in this distribution are described by a covariance matrix. The covariance matrix adaptation (CMA) is a method to update the covariance matrix of this distribution. This is particularly useful, if the function f is ill-conditioned.Adaptation of the covariance matrix amounts to learning a second order model of the underlying objective function similar to the approximation of the inverse Hessian matrix in the Quasi-Newton method in classical optimization. In contrast to most classical methods, fewer assumptions on the nature of the underlying objective function are made. Only the ranking between candidate solutions is exploited for learning the sample distribution and neither derivatives nor even the function values themselves are required by the method.

Contents

Principles

Two main principles for the adaptation of parameters of the search distribution are exploited in the CMA-ES algorithm.

First, a maximum-likelihood principle, based on the idea to increase the probability of successful candidate solutions and search steps. The mean of the distribution is updated such that the likelihood of previously successful candidate solutions is maximized. The covariance matrix of the distribution is updated (incrementally) such that the likelihood of previously successful search steps is increased. Both updates can be interpreted as a natural gradient descent. Also, in consequence, the CMA conducts an iterated principal components analysis of successful search steps while retaining all principal axes. Estimation of distribution algorithms and the Cross-Entropy Method are based on very similar ideas, but estimate (non-incrementally) the covariance matrix by maximizing the likelihood of successful solution points instead of successful search steps.

Second, two paths of the time evolution of the distribution mean of the strategy are recorded, called search or evolution paths. These paths contain significant information about the correlation between consecutive steps. Specifically, if consecutive steps are taken in a similar direction, the evolution paths become long. The evolution paths are exploited in two ways. One path is used for the covariance matrix adaptation procedure in place of single successful search steps and facilitates a possibly much faster variance increase of favorable directions. The other path is used to conduct an additional step-size control. This step-size control aims to make consecutive movements of the distribution mean orthogonal in expectation. The step-size control effectively prevents premature convergence yet allowing fast convergence to an optimum.

Algorithm

In the following the most commonly used (μ/μw, λ)-CMA-ES is outlined, where in each iteration step a weighted combination of the μ best out of λ new candidate solutions is used to update the distribution parameters. The main loop consists of three main parts: 1) sampling of new solutions, 2) re-ordering of the sampled solutions based on their fitness, 3) update of the internal state variables based on the re-ordered samples. A pseudocode of the algorithm looks as follows.

set λ // number of samples per iteration, at least two, generally > 4 initialize m, σ, C = I, pσ = 0, pc = 0 // initialize state variables while not terminate // iterate for i in {1...λ} // sample λ new solutions and evaluate them xi = sample_multivariate_normal(mean=m, covariance_matrix=σ2C) fi = fitness(xi) x1...λ ← xs(1)...s(λ) with s(i) = argsort(f1...λ, i) // sort solutions m' = m // we need later m − m' and xi − m' m ← update_m(x1,..., xλ) // move mean to better solutions pσ ← update_ps(pσ, σ − 1C − 1 / 2(m − m')) // update isotropic evolution path pc ← update_pc(pc, σ − 1(m − m'), | | pσ | | ) // update anisotropic evolution path C ← update_C(C, pc, (x1 − m') / σ,..., (xλ − m') / σ) // update covariance matrix σ ← update_sigma(σ, | | pσ | | ) // update step-size using isotropic path length return m or x1The order of the five update assignments is relevant. In the following, the update equations for the five state variables is specified.

Given are the search space dimension n and the iteration step k. The five state variables are

, the distribution mean and current favorite solution to the optimization problem,

, the distribution mean and current favorite solution to the optimization problem,

- σk > 0, the step-size,

- Ck, a symmetric and positive definite

covariance matrix with C0 = I and

covariance matrix with C0 = I and

, two evolution paths, initially set to the zero vector.

, two evolution paths, initially set to the zero vector.

The iteration starts with sampling λ > 1 candidate solutions

from a multivariate normal distribution

from a multivariate normal distribution  , i.e. for i = 1,...,λ

, i.e. for i = 1,...,λThe second line suggests the interpretation as perturbation (mutation) of the current favorite solution vector mk (the distribution mean vector). The candidate solutions xi are evaluated on the objective function

to be minimized. Denoting the f-sorted candidate solutions as

to be minimized. Denoting the f-sorted candidate solutions asthe new mean value is computed as

where the positive (recombination) weights

sum to one. Typically,

sum to one. Typically,  and the weights are chosen such that

and the weights are chosen such that  . The only feedback used from the objective function here and in the following is an ordering of the sampled candidate solutions due to the indices i:λ.

. The only feedback used from the objective function here and in the following is an ordering of the sampled candidate solutions due to the indices i:λ.The step-size σk is updated using cumulative step-size adaptation (CSA), sometimes also denoted as path length control. The evolution path (or search path) pσ is updated first.

where

is the backward time horizon for the evolution path pσ and larger than one,

is the backward time horizon for the evolution path pσ and larger than one,

is the variance effective selection mass and

is the variance effective selection mass and  by definition of wi,

by definition of wi,

is the unique symmetric square root of the inverse of Ck, and

is the unique symmetric square root of the inverse of Ck, and

- dσ is the damping parameter usually close to one. For

or cσ = 0 the step-size remains unchanged.

or cσ = 0 the step-size remains unchanged.

The step-size σk is increased if and only if

is larger than the expected value

is larger than the expected valueand decreased if it is smaller. For this reason, the step-size update tends to make consecutive steps

-conjugate, in that after the adaptation has been successful

-conjugate, in that after the adaptation has been successful  .

.Finally, the covariance matrix is updated, where again the respective evolution path is updated first.

where T denotes the transpose and

is the backward time horizon for the evolution path pc and larger than one,

is the backward time horizon for the evolution path pc and larger than one,

and the indicator function

and the indicator function ![\mathbf{1}_{[0,\alpha\sqrt{n}]}(\|p_\sigma\|)](c/00c88295ff94dd8bbc87e8ca1f70da2b.png) evaluates to one iff

evaluates to one iff ![\|p_\sigma\|\in[0,\alpha\sqrt{n}]](2/0b29a7de4a8794bd047435d420c739e8.png) or, in other words,

or, in other words,  , which is usually the case,

, which is usually the case,

![c_s = (1 - \mathbf{1}_{[0,\alpha\sqrt{n}]}(\|p_\sigma\|)) \,c_1 c_c (2-c_c)](4/a34bc4e0409fb41b1368340d2cadaa88.png) makes partly up for the small variance loss in case the indicator is zero,

makes partly up for the small variance loss in case the indicator is zero,

is the learning rate for the rank-one update of the covariance matrix and

is the learning rate for the rank-one update of the covariance matrix and

is the learning rate for the rank-μ update of the covariance matrix and must not exceed 1 − c1.

is the learning rate for the rank-μ update of the covariance matrix and must not exceed 1 − c1.

The covariance matrix update tends to increase the likelihood for pc and for (xi:λ − mk) / σk to be sampled from

. This completes the iteration step.

. This completes the iteration step.The number of candidate samples per iteration, λ, is not determined a priori and can vary in a wide range. Smaller values, for example λ = 10, lead to more local search behavior. Larger values, for example λ = 10n with default value

, render the search more global. Sometimes the algorithm is repeatedly restarted with increasing λ by a factor of two for each restart.[1] Besides of setting λ (or possibly μ instead, if for example λ is predetermined by the number of available processors), the above introduced parameters are not specific to the given objective function and therefore not meant to be modified by the user.

, render the search more global. Sometimes the algorithm is repeatedly restarted with increasing λ by a factor of two for each restart.[1] Besides of setting λ (or possibly μ instead, if for example λ is predetermined by the number of available processors), the above introduced parameters are not specific to the given objective function and therefore not meant to be modified by the user.Example code in Matlab/Octave

function xmin=purecmaes % (mu/mu_w, lambda)-CMA-ES % -------------------- Initialization -------------------------------- % User defined input parameters (need to be edited) strfitnessfct = 'frosenbrock'; % name of objective/fitness function N = 20; % number of objective variables/problem dimension xmean = rand(N,1); % objective variables initial point sigma = 0.5; % coordinate wise standard deviation (step size) stopfitness = 1e-10; % stop if fitness < stopfitness (minimization) stopeval = 1e3*N^2; % stop after stopeval number of function evaluations % Strategy parameter setting: Selection lambda = 4+floor(3*log(N)); % population size, offspring number mu = lambda/2; % number of parents/points for recombination weights = log(mu+1/2)-log(1:mu)'; % muXone array for weighted recombination mu = floor(mu); weights = weights/sum(weights); % normalize recombination weights array mueff=sum(weights)^2/sum(weights.^2); % variance-effectiveness of sum w_i x_i % Strategy parameter setting: Adaptation cc = (4+mueff/N) / (N+4 + 2*mueff/N); % time constant for cumulation for C cs = (mueff+2) / (N+mueff+5); % t-const for cumulation for sigma control c1 = 2 / ((N+1.3)^2+mueff); % learning rate for rank-one update of C cmu = 2 * (mueff-2+1/mueff) / ((N+2)^2+mueff); % and for rank-mu update damps = 1 + 2*max(0, sqrt((mueff-1)/(N+1))-1) + cs; % damping for sigma % usually close to 1 % Initialize dynamic (internal) strategy parameters and constants pc = zeros(N,1); ps = zeros(N,1); % evolution paths for C and sigma B = eye(N,N); % B defines the coordinate system D = ones(N,1); % diagonal D defines the scaling C = B * diag(D.^2) * B'; % covariance matrix C invsqrtC = B * diag(D.^-1) * B'; % C^-1/2 eigeneval = 0; % track update of B and D chiN=N^0.5*(1-1/(4*N)+1/(21*N^2)); % expectation of % ||N(0,I)|| == norm(randn(N,1)) % -------------------- Generation Loop -------------------------------- counteval = 0; % the next 40 lines contain the 20 lines of interesting code while counteval < stopeval % Generate and evaluate lambda offspring for k=1:lambda, arx(:,k) = xmean + sigma * B * (D .* randn(N,1)); % m + sig * Normal(0,C) arfitness(k) = feval(strfitnessfct, arx(:,k)); % objective function call counteval = counteval+1; end % Sort by fitness and compute weighted mean into xmean [arfitness, arindex] = sort(arfitness); % minimization xold = xmean; xmean = arx(:,arindex(1:mu))*weights; % recombination, new mean value % Cumulation: Update evolution paths ps = (1-cs)*ps ... + sqrt(cs*(2-cs)*mueff) * invsqrtC * (xmean-xold) / sigma; hsig = norm(ps)/sqrt(1-(1-cs)^(2*counteval/lambda))/chiN < 1.4 + 2/(N+1); pc = (1-cc)*pc ... + hsig * sqrt(cc*(2-cc)*mueff) * (xmean-xold) / sigma; % Adapt covariance matrix C artmp = (1/sigma) * (arx(:,arindex(1:mu))-repmat(xold,1,mu)); C = (1-c1-cmu) * C ... % regard old matrix + c1 * (pc*pc' ... % plus rank one update + (1-hsig) * cc*(2-cc) * C) ... % minor correction if hsig==0 + cmu * artmp * diag(weights) * artmp'; % plus rank mu update % Adapt step size sigma sigma = sigma * exp((cs/damps)*(norm(ps)/chiN - 1)); % Decomposition of C into B*diag(D.^2)*B' (diagonalization) if counteval - eigeneval > lambda/(c1+cmu)/N/10 % to achieve O(N^2) eigeneval = counteval; C = triu(C) + triu(C,1)'; % enforce symmetry [B,D] = eig(C); % eigen decomposition, B==normalized eigenvectors D = sqrt(diag(D)); % D is a vector of standard deviations now invsqrtC = B * diag(D.^-1) * B'; end % Break, if fitness is good enough or condition exceeds 1e14, better termination methods are advisable if arfitness(1) <= stopfitness || max(D) > 1e7 * min(D) break; end end % while, end generation loop xmin = arx(:, arindex(1)); % Return best point of last iteration. % Notice that xmean is expected to be even % better. % --------------------------------------------------------------- function f=frosenbrock(x) if size(x,1) < 2 error('dimension must be greater one'); end f = 100*sum((x(1:end-1).^2 - x(2:end)).^2) + sum((x(1:end-1)-1).^2);

Theoretical Foundations

Given the distribution parameters—mean, variances and covariances—the normal probability distribution for sampling new candidate solutions is the maximum entropy probability distribution over

, that is, the sample distribution with the minimal amount of prior information built into the distribution. More considerations on the update equations of CMA-ES are made in the following.

, that is, the sample distribution with the minimal amount of prior information built into the distribution. More considerations on the update equations of CMA-ES are made in the following.Variable Metric

The CMA-ES implements a stochastic variable-metric method. In the very particular case of a convex-quadratic objective function

the covariance matrix Ck adapts to the inverse of the Hessian matrix H, up to a scalar factor and small random fluctuations. More general, also on the function

, where g is strictly increasing and therefore order preserving and f is convex-quadratic, the covariance matrix Ck adapts to H − 1, up to a scalar factor and small random fluctuations.

, where g is strictly increasing and therefore order preserving and f is convex-quadratic, the covariance matrix Ck adapts to H − 1, up to a scalar factor and small random fluctuations.Maximum-Likelihood Updates

The update equations for mean and covariance matrix maximize a likelihood while resembling an expectation-maximization algorithm. The update of the mean vector m maximizes a log-likelihood, such that

where

denotes the log-likelihood of x from a multivariate normal distribution with mean m and any positive definite covariance matrix C. To see that mk + 1 is independent of C remark first that this is the case for any diagonal matrix C, because the coordinate-wise maximizer is independent of a scaling factor. Then, rotation of the data points or choosing C non-diagonal are equivalent.

The rank-μ update of the covariance matrix, that is, the right most summand in the update equation of Ck, maximizes a log-likelihood in that

for

(otherwise C is singular, but substantially the same result holds for μ < n). Here,

(otherwise C is singular, but substantially the same result holds for μ < n). Here,  denotes the likelihood of x from a multivariate normal distribution with zero mean and covariance matrix C. Therefore, for c1 = 0 and cμ = 1, Ck + 1 is the above maximum-likelihood estimator. See estimation of covariance matrices for details on the derivation.

denotes the likelihood of x from a multivariate normal distribution with zero mean and covariance matrix C. Therefore, for c1 = 0 and cμ = 1, Ck + 1 is the above maximum-likelihood estimator. See estimation of covariance matrices for details on the derivation.Natural Gradient Descent in the Space of Sample Distributions

Akimoto et al.[2] recently found that the update of the distribution parameters resembles the descend in direction of a sampled natural gradient of the expected objective function value E f (x) (to be minimized), where the expectation is taken under the sample distribution. With the parameter setting of cσ = 0 and c1 = 0, i.e. without step-size control and rank-one update, CMA-ES can thus be viewed as an instantiation of Natural Evolution Strategies (NES).[2][3] The natural gradient is independent of the parameterization of the distribution. Taken with respect to the parameters θ of the sample distribution p, the gradient of E f (x) can be expressed as

where p(x) = p(x | θ) depends on the parameter vector θ, the so-called score function,

, indicates the relative sensitivity of p w.r.t. θ, and the expectation is taken with respect to the distribution p. The natural gradient of E f (x), complying with the Fisher information metric (an informational distance measure between probability distributions and the curvature of the relative entropy), now reads

, indicates the relative sensitivity of p w.r.t. θ, and the expectation is taken with respect to the distribution p. The natural gradient of E f (x), complying with the Fisher information metric (an informational distance measure between probability distributions and the curvature of the relative entropy), now readswhere the Fisher information matrix Fθ is the expectation of the Hessian of -lnp and renders the expression independent of the chosen parameterization. Combining the previous equalities we get

A Monte Carlo approximation of the latter expectation takes the average over λ samples from p

where the notation i:λ from above is used and therefore wi are monotonously decreasing in i. We might use, for a more robust approximation, rather wi as defined in the CMA-ES and zero for i > μ and let

such that p(. | θ) is the density of the multivariate normal distribution

. Then, we have an explicit expression for

. Then, we have an explicit expression forand for

and, after some calculations, the updates in the CMA-ES turn out as[2]

and

where mat forms the proper matrix from the respective natural gradient sub-vector. That means, setting c1 = cσ = 0, the CMA-ES updates descend in direction of the approximation

of the natural gradient while using different step-sizes (learning rates) for the orthogonal parameters m and C respectively.

of the natural gradient while using different step-sizes (learning rates) for the orthogonal parameters m and C respectively.Stationarity or Unbiasedness

It is comparatively easy to see that the update equations of CMA-ES satisfy some stationarity conditions, in that they are essentially unbiased. Under neutral selection, where

, we find that

, we find thatand under some mild additional assumptions on the initial conditions

and with an additional minor correction in the covariance matrix update for the case where the indicator function evaluates to zero, we find

Invariance

Invariance properties imply uniform performance on a class of objective functions. They have been argued to be an advantage, because they allow to generalize and predict the behavior of the algorithm and therefore strengthen the meaning of empirical results obtained on single functions. The following invariance properties have been established for CMA-ES.

- Invariance under order-preserving transformations of the objective function value f, in that for any

the behavior is identical on

the behavior is identical on  for all strictly increasing

for all strictly increasing  . This invariance is easy to verify, because only the f-ranking is used in the algorithm, which is invariant under the choice of g.

. This invariance is easy to verify, because only the f-ranking is used in the algorithm, which is invariant under the choice of g.

- Scale-invariance, in that for any

the behavior is independent of α > 0 for the objective function

the behavior is independent of α > 0 for the objective function  given

given  and

and  .

.

- Invariance under rotation of the search space in that for any

and any

and any  the behavior on

the behavior on  is independent of the orthogonal matrix R, given m0 = R − 1z. More general, the algorithm is also invariant under general linear transformations R when additionally the initial covariance matrix is chosen as

is independent of the orthogonal matrix R, given m0 = R − 1z. More general, the algorithm is also invariant under general linear transformations R when additionally the initial covariance matrix is chosen as  .

.

Any serious parameter optimization method should be translation invariant, but most methods do not exhibit all the above described invariance properties. A prominent example with the same invariance properties is the Nelder–Mead method, where the initial simplex must be chosen respectively.

Convergence

Conceptual considerations like the scale-invariance property of the algorithm, the analysis of simpler evolution strategies, and overwhelming empirical evidence suggest that the algorithm converges on a large class of functions fast to the global optimum, denoted as x * . On some functions, convergence occurs independently of the initial conditions with probability one. On some functions the probability is smaller than one and typically depends on the initial m0 and σ0. Empirically, the fastest possible convergence rate in k for rank-based direct search methods can often be observed (depending on the context denoted as linear or log-linear or exponential convergence). Informally, we can write

for some c > 0, and more rigorously

or similarly,

This means that on average the distance to the optimum is decreased in each iteration by a "constant" factor, namely by exp( − c). The convergence rate c is roughly 0.1λ / n, given λ is not much larger than the dimension n. Even with optimal σ and C, the convergence rate c cannot largely exceed 0.25λ / n, given the above recombination weights wi are all non-negative. The actual linear dependencies in λ and n are remarkable and they are in both cases the best one can hope for in this kind of algorithm. Yet, a rigorous proof of convergence is missing.

Interpretation as Coordinate System Transformation

Using a non-identity covariance matrix for the multivariate normal distribution in evolution strategies is equivalent to a coordinate system transformation of the solution vectors,[4] mainly because the sampling equation

can be equivalently expressed in an "encoded space" as

The covariance matrix defines a bijective transformation (encoding) for all solution vectors into a space, where the sampling takes place with identity covariance matrix. Because the update equations in the CMA-ES are invariant under coordinate system transformations (general linear transformations), the CMA-ES can be re-written as an adaptive encoding procedure applied to a simple evolution strategy with identity covariance matrix.[4] This adaptive encoding procedure is not confined to algorithms that sample from a multivariate normal distribution (like evolution strategies), but can in principle be applied to any iterative search method.

Performance in Practice

In contrast to most other evolutionary algorithms, the CMA-ES is, from the users perspective, quasi parameter-free. However, the number of candidate samples λ (population size) can be adjusted by the user in order to change the characteristic search behavior (see above). CMA-ES has been empirically successful in hundreds of applications and is considered to be useful in particular on non-convex, non-separable, ill-conditioned, multi-modal or noisy objective functions. The search space dimension ranges typically between two and a few hundred. Assuming a black-box optimization scenario, where gradients are not available (or not useful) and function evaluations are the only considered cost of search, the CMA-ES method is likely to be outperformed by other methods in the following conditions:

- on low-dimensional functions, say n < 5, for example by the downhill simplex method or surrogate-based methods (like kriging with expected improvement);

- on separable functions without or with only negligible dependencies between the design variables in particular in the case of multi-modality or large dimension, for example by differential evolution;

- on (nearly) convex-quadratic functions with low or moderate condition number of the Hessian matrix, where BFGS or NEWUOA are typically ten times faster;

- on functions that can already be solved with a comparatively small number of function evaluations, say no more than 10n, where CMA-ES is often slower than, for example, NEWUOA or Multilevel Coordinate Search (MCS).

On separable functions the performance disadvantage is likely to be most significant, in that CMA-ES might not be able to find at all comparable solutions. On the other hand, on non-separable functions that are ill-conditioned or rugged or can only be solved with more than 100n function evaluations, the CMA-ES shows most often superior performance.

Variations and Extensions

The (1+1)-CMA-ES [5] generates only one candidate solution per iteration step which only becomes the new distribution mean, if it is better than the old mean. For cc = 1 it is a close variant of Gaussian adaptation. The CMA-ES has also been extended to multiobjective optimization as MO-CMA-ES .[6] Another remarkable extension has been the addition of a negative update of the covariance matrix with the so-called active CMA .[7]

See also

References

- ^ Auger, A.; N. Hansen (2005). "A Restart CMA Evolution Strategy With Increasing Population Size". 2005 IEEE Congress on Evolutionary Computation, Proceedings. IEEE. pp. 1769–1776. http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.97.8108&rep=rep1&type=pdf.

- ^ a b c Akimoto, Y.; Y. Nagata and I. Ono and S. Kobayashi (2010). "Bidirectional Relation between CMA Evolution Strategies and Natural Evolution Strategies". Parallel Problem Solving from Nature, PPSN XI. Springer. pp. 154–163.

- ^ Glasmachers, T.; T. Schaul, Y. Sun, D. Wierstra and J. Schmidhuber (2010). "Exponential Natural Evolution Strategies". Genetic and Evolutionary Computation Conference GECCO. Portland, OR. http://www.idsia.ch/~tom/publications/xnes.pdf.

- ^ a b Hansen, N. (2008). "Adpative Encoding: How to Render Search Coordinate System Invariant". Parallel Problem Solving from Nature, PPSN X. Springer. pp. 205–214. http://hal.archives-ouvertes.fr/inria-00287351/en/.

- ^ Igel, C.; T. Suttorp and N. Hansen (2006). "A Computational Efficient Covariance Matrix Update and a (1+1)-CMA for Evolution Strategies". Proceedings of the Genetic and Evolutionary Computation Conference (GECCO). ACM Press. pp. 453–460. http://www.cs.york.ac.uk/rts/docs/GECCO_2006/docs/p453.pdf.

- ^ Igel, C.; N. Hansen and S. Roth (2007). "Covariance Matrix Adaptation for Multi-objective Optimization". Evolutionary Computation (MIT press) 15 (1): 1–28. doi:10.1162/evco.2007.15.1.1. PMID 17388777. http://www.mitpressjournals.org/doi/pdfplus/10.1162/evco.2007.15.1.1.

- ^ Jastrebski, G.A.; D.V. Arnold (2006). "Improving Evolution Strategies through Active Covariance Matrix Adaptation". 2006 IEEE World Congress on Computational Intelligence, Proceedings. IEEE. pp. 9719–9726. doi:10.1109/CEC.2006.1688662.

Bibliography

- Hansen N, Ostermeier A (2001). Completely derandomized self-adaptation in evolution strategies. Evolutionary Computation, 9(2) pp. 159–195. [1]

- Hansen N, Müller SD, Koumoutsakos P (2003). Reducing the time complexity of the derandomized evolution strategy with covariance matrix adaptation (CMA-ES). Evolutionary Computation, 11(1) pp. 1–18. [2]

- Hansen N, Kern S (2004). Evaluating the CMA evolution strategy on multimodal test functions. In Xin Yao et al., editors, Parallel Problem Solving from Nature - PPSN VIII, pp. 282–291, Springer. [3]

- Igel C, Hansen N, Roth S (2007). Covariance Matrix Adaptation for Multi-objective Optimization. Evolutionary Computation, 15(1) pp. 1–28. [4]

External links

- A short introduction to CMA-ES by N. Hansen

- The CMA Evolution Strategy: A Tutorial

- CMA-ES source code page

Major subfields of optimization Convex programming · Integer programming · Quadratic programming · Nonlinear programming · Stochastic programming · Robust optimization · Combinatorial optimization · Infinite-dimensional optimization · Metaheuristics · Constraint satisfaction · Multiobjective optimizationCategories:- Evolutionary algorithms

- Stochastic optimization

- Optimization algorithms

Wikimedia Foundation. 2010.

![p_c \gets \underbrace{(1-c_c)}_{\!\!\!\!\!\text{discount factor}\!\!\!\!\!}\,

p_c +

\underbrace{\mathbf{1}_{[0,\alpha\sqrt{n}]}(\|p_\sigma\|)}_{\text{indicator function}}

\overbrace{\sqrt{1 - (1-c_c)^2}}^{

\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\text{complements for discounted variance}

\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!}

\underbrace{\sqrt{\mu_w}

\, \frac{m_{k+1} - m_k}{\sigma_k}}_{\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!

\text{distributed as}\; \mathcal{N}(0,C_k)\;\text{under neutral selection}

\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!}](c/a2cea7fe0e7beb7d2725d1c4ef5dd533.png)

![\theta = [m_k^T \mathrm{vec}(\sigma_k^2 C_k)^T]^T \in \mathbb{R}^{n+n^2}](9/f2967c0108f8e249082ca8360c72b883.png)

![F^{-1}_\theta = \left[\begin{array}{cc}\sigma_k^2 C_k&0\\ 0&2\sigma_k^2 C_k\otimes \sigma_k^2 C_k\end{array}\right]](1/9e11e2c05ae53d497afdf7b0ba7a58a6.png)

![\begin{align}

m_{k+1}

&= m_k - \underbrace{[\tilde{\nabla} \widehat{E}_\theta(f)]_{1,\dots, n}}_{

\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!

\text{natural gradient for mean}

\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!

}

\\

&= m_k + \sum_{i=1}^\lambda w_i (x_{i:\lambda} - m_k)

\end{align}](c/7bca427d99a92d46ed15a1435b99a9b6.png)

![\begin{align}

C_{k+1}

&= C_k + c_1(p_c p_c^T - C_k)

- \frac{c_\mu}{\sigma_k^2}\,\mathrm{mat}(\overbrace{[\tilde{\nabla} \widehat{E}_\theta(f)]_{n+1,\dots,n+n^2}}^{

\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!

\text{natural gradient for covariance matrix}

\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!\!

})\\

&= C_k + c_1(p_c p_c^T - C_k)

+ \frac{c_\mu}{\sigma_k^2} \sum_{i=1}^\lambda w_i \left({(x_{i:\lambda} - m_k)}{} \left({x_{i:\lambda} - m_k}{}\right)^T - \sigma_k^2 C_k\right)

\end{align}](5/bf58d41dbdbe0698685337e130ed7db0.png)