Differential evolution


In computer science, differential evolution (DE) is a method that optimizes a problem by iteratively trying to improve a candidate solution with regard to a given measure of quality. Such methods are commonly known as metaheuristics, as they make few or no assumptions about the problem being optimized and can search very large spaces of candidate solutions. However, metaheuristics such as DE do not guarantee that an optimal solution is ever found.

DE is used for multidimensional real-valued functions but does not use the gradient of the problem being optimized. This means DE does not require the optimization problem to be differentiable, as is required by classic optimization methods such as gradient descent and quasi-Newton methods. DE can therefore also be used on optimization problems that are not even continuous, are noisy, change over time, etc.

DE optimizes a problem by maintaining a population of candidate solutions and creating new candidate solutions by combining existing ones according to its simple formulae, and then keeping whichever candidate solution has the best score or fitness on the optimization problem at hand. In this way the optimization problem is treated as a black box that merely provides a measure of quality given a candidate solution and the gradient is therefore not needed.

DE is originally due to Storn and Price [1][2]. Books have been published on theoretical and practical aspects of using DE in parallel computing, multiobjective optimization and constrained optimization; these books also contain surveys of application areas [3][4][5].


Algorithm

A basic variant of the DE algorithm works by having a population of candidate solutions (called agents). These agents are moved around in the search-space by using simple mathematical formulae to combine the positions of existing agents from the population. If the new position of an agent is an improvement, it is accepted and forms part of the population; otherwise the new position is simply discarded. The process is repeated, and by doing so it is hoped, but not guaranteed, that a satisfactory solution will eventually be discovered.

Formally, let $f: \mathbb{R}^n \to \mathbb{R}$ be the cost function which must be minimized or the fitness function which must be maximized. The function takes a candidate solution as argument in the form of a vector of real numbers and produces a real number as output which indicates the fitness of the given candidate solution. The gradient of $f$ is not known. The goal is to find a solution $\mathbf{m}$ for which $f(\mathbf{m}) \le f(\mathbf{p})$ for all $\mathbf{p}$ in the search-space, which would mean $\mathbf{m}$ is the global minimum. Maximization can be performed by considering the function $h := -f$ instead.

Let $\mathbf{x} \in \mathbb{R}^n$ designate a candidate solution (agent) in the population. The basic DE algorithm can then be described as follows:

- Initialize all agents $\mathbf{x}$ with random positions in the search-space.
- Until a termination criterion is met (e.g. number of iterations performed, or adequate fitness reached), repeat the following:
  - For each agent $\mathbf{x}$ in the population do:
    - Pick three agents $\mathbf{a}$, $\mathbf{b}$ and $\mathbf{c}$ from the population at random; they must be distinct from each other as well as from agent $\mathbf{x}$.
    - Pick a random index $R \in \{1, \ldots, n\}$ ($n$ being the dimensionality of the problem to be optimized).
    - Compute the agent's potentially new position $\mathbf{y} = [y_1, \ldots, y_n]$ as follows:
      - For each $i \in \{1, \ldots, n\}$, pick a uniformly distributed number $r_i \sim U(0,1)$.
      - If $r_i < \text{CR}$ or $i = R$, then set $y_i = a_i + F \times (b_i - c_i)$; otherwise set $y_i = x_i$.
    - If $f(\mathbf{y}) < f(\mathbf{x})$, then replace the agent in the population with the improved candidate solution, that is, replace $\mathbf{x}$ with $\mathbf{y}$ in the population.
- Pick the agent from the population that has the highest fitness or lowest cost and return it as the best found candidate solution.
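The steps above can be sketched in Python as follows. This is a minimal, non-authoritative sketch under assumptions the text does not state: the search space is a box given by hypothetical `bounds` pairs (used only to initialize agents; trial vectors are not clipped), the termination criterion is a fixed number of generations `max_iter`, and an improved agent replaces the old one immediately, so later agents in the same generation may already combine it. The function name `differential_evolution` and its defaults (NP = 20, F = 0.8, CR = 0.9) are illustrative only and unrelated to any library function of the same name. Python indices run from 0 to n − 1 rather than 1 to n.

```python
import random
from typing import Callable, List, Optional, Sequence, Tuple


def differential_evolution(
    f: Callable[[Sequence[float]], float],
    bounds: Sequence[Tuple[float, float]],
    np_: int = 20,
    F: float = 0.8,
    CR: float = 0.9,
    max_iter: int = 200,
    seed: Optional[int] = None,
) -> Tuple[List[float], float]:
    """Minimize f with the basic DE loop (sketch, not a reference implementation)."""
    if np_ < 4:
        raise ValueError("need x plus three distinct agents a, b, c, so np_ >= 4")
    rng = random.Random(seed)
    n = len(bounds)
    # Initialize all agents with random positions in the assumed box-shaped search space.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(np_)]
    cost = [f(x) for x in pop]

    for _ in range(max_iter):  # assumed termination criterion: fixed number of generations
        for j in range(np_):
            x = pop[j]
            # Pick a, b, c at random, distinct from each other and from agent x.
            others = [k for k in range(np_) if k != j]
            a, b, c = (pop[k] for k in rng.sample(others, 3))
            R = rng.randrange(n)  # this coordinate always takes the mutated value
            # r_i ~ U(0,1) is drawn for every i; y_i = a_i + F*(b_i - c_i) if r_i < CR or i == R.
            y = [
                a[i] + F * (b[i] - c[i]) if rng.random() < CR or i == R else x[i]
                for i in range(n)
            ]
            fy = f(y)
            if fy < cost[j]:  # keep y only if it improves on x
                pop[j], cost[j] = y, fy

    best = min(range(np_), key=lambda k: cost[k])
    return pop[best], cost[best]


if __name__ == "__main__":
    sphere = lambda v: sum(t * t for t in v)
    best_x, best_cost = differential_evolution(sphere, [(-5.0, 5.0)] * 3, seed=1)
    print(best_x, best_cost)
```

To maximize a fitness function `g` instead, the same sketch can be called with its negation, e.g. `differential_evolution(lambda v: -g(v), bounds)`, following the h = −f remark above.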

Note that $F \in [0,2]$ is called the differential weight and $\text{CR} \in [0,1]$ is called the crossover probability. Both parameters are selectable by the practitioner, along with the population size $\text{NP} \ge 4$ (see below).

Parameter selection

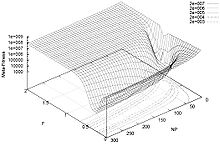

The choice of the DE parameters F, CR and NP can have a large impact on optimization performance. Selecting the DE parameters that yield good performance has therefore been the subject of much research. Rules of thumb for parameter selection were devised by Storn et al. [2][3] and Liu and Lampinen [6]. Mathematical convergence analysis regarding parameter selection was done by Zaharie [7]. Meta-optimization of the DE parameters was done by Pedersen [8][9] and Zhang et al. [10].
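To give a feel for how much F and CR can matter, the sketch below runs a compact copy of the basic DE loop on the Rosenbrock function (a common benchmark, chosen here as an assumption) for a small grid of (F, CR) pairs and averages the best cost over a few seeds. This crude grid search is only a loose illustration of the idea of tuning DE parameters empirically; it is not the meta-optimization method of Pedersen or Zhang et al., and the grid values, evaluation budget and helper names (`de_best_cost`, `grid_search`) are hypothetical.

```python
import itertools
import random


def de_best_cost(f, bounds, NP, F, CR, iters, seed):
    """Compact basic DE (random base vector, binomial crossover); returns the lowest cost found."""
    rng = random.Random(seed)
    n = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(NP)]
    cost = [f(x) for x in pop]
    for _ in range(iters):
        for j in range(NP):
            # Three agents distinct from each other and from agent j.
            a, b, c = (pop[k] for k in rng.sample([k for k in range(NP) if k != j], 3))
            R = rng.randrange(n)
            y = [a[i] + F * (b[i] - c[i]) if rng.random() < CR or i == R else pop[j][i]
                 for i in range(n)]
            fy = f(y)
            if fy < cost[j]:
                pop[j], cost[j] = y, fy
    return min(cost)


def rosenbrock(v):
    """Rosenbrock benchmark; its global minimum is 0 at (1, ..., 1)."""
    return sum(100.0 * (v[i + 1] - v[i] ** 2) ** 2 + (1.0 - v[i]) ** 2
               for i in range(len(v) - 1))


def grid_search(f, bounds, NP=20, iters=100, seeds=(0, 1, 2),
                Fs=(0.3, 0.5, 0.8), CRs=(0.1, 0.5, 0.9)):
    """Mean final cost over a few seeds for each (F, CR) pair (hypothetical grid)."""
    results = {}
    for F, CR in itertools.product(Fs, CRs):
        runs = [de_best_cost(f, bounds, NP, F, CR, iters, s) for s in seeds]
        results[(F, CR)] = sum(runs) / len(runs)
    return results


results = grid_search(rosenbrock, [(-2.0, 2.0)] * 4)
for (F, CR), mean_cost in sorted(results.items(), key=lambda kv: kv[1]):
    print(f"F={F:.1f} CR={CR:.1f} mean best cost={mean_cost:.3e}")
```

The ranking depends on the problem, its dimensionality and the evaluation budget, so a setting that does well in this toy run need not transfer to other problems.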

Variants

Variants of the DE algorithm are continually being developed in an effort to improve optimization performance. Many different schemes for performing crossover and mutation of agents are possible in the basic algorithm given above; see e.g. [2]. More advanced DE variants are also being developed, with a popular research trend being to perturb or adapt the DE parameters during optimization; see e.g. Price et al. [3], Liu and Lampinen [11], Qin and Suganthan [12], and Brest et al. [13].


References

- [1] Storn, R.; Price, K. (1997). "Differential evolution - a simple and efficient heuristic for global optimization over continuous spaces". Journal of Global Optimization 11: 341–359. doi:10.1023/A:1008202821328.

- [2] Storn, R. (1996). "On the usage of differential evolution for function optimization". Biennial Conference of the North American Fuzzy Information Processing Society (NAFIPS). pp. 519–523.

- [3] Price, K.; Storn, R.M.; Lampinen, J.A. (2005). Differential Evolution: A Practical Approach to Global Optimization. Springer. ISBN 978-3-540-20950-8. http://www.springer.com/computer/theoretical+computer+science/foundations+of+computations/book/978-3-540-20950-8.

- [4] Feoktistov, V. (2006). Differential Evolution: In Search of Solutions. Springer. ISBN 978-0-387-36895-5. http://www.springer.com/mathematics/book/978-0-387-36895-5.

- [5] Chakraborty, U.K., ed. (2008). Advances in Differential Evolution. Springer. ISBN 978-3-540-68827-3. http://www.springer.com/engineering/book/978-3-540-68827-3.

- [6] Liu, J.; Lampinen, J. (2002). "On setting the control parameter of the differential evolution method". Proceedings of the 8th International Conference on Soft Computing (MENDEL). Brno, Czech Republic. pp. 11–18.

- [7] Zaharie, D. (2002). "Critical values for the control parameters of differential evolution algorithms". Proceedings of the 8th International Conference on Soft Computing (MENDEL). Brno, Czech Republic. pp. 62–67.

- [8] Pedersen, M.E.H. (2010). Tuning & Simplifying Heuristical Optimization (PhD thesis). University of Southampton, School of Engineering Sciences, Computational Engineering and Design Group. http://www.hvass-labs.org/people/magnus/thesis/pedersen08thesis.pdf.

- [9] Pedersen, M.E.H. (2010). "Good parameters for differential evolution". Technical Report HL1002 (Hvass Laboratories). http://www.hvass-labs.org/people/magnus/publications/pedersen10good-de.pdf.

- [10] Zhang, X.; Jiang, X.; Scott, P.J. (2011). "A Minimax Fitting Algorithm for Ultra-Precision Aspheric Surfaces". The 13th International Conference on Metrology and Properties of Engineering Surfaces.

- [11] Liu, J.; Lampinen, J. (2005). "A fuzzy adaptive differential evolution algorithm". Soft Computing 9 (6): 448–462.

- [12] Qin, A.K.; Suganthan, P.N. (2005). "Self-adaptive differential evolution algorithm for numerical optimization". Proceedings of the IEEE Congress on Evolutionary Computation (CEC). pp. 1785–1791.

- [13] Brest, J.; Greiner, S.; Boskovic, B.; Mernik, M.; Zumer, V. (2006). "Self-adapting control parameters in differential evolution: a comparative study on numerical benchmark functions". IEEE Transactions on Evolutionary Computation 10 (6): 646–657.

External links

- Storn's homepage on DE, featuring source code for several programming languages.


Wikimedia Foundation. 2010.