- NNPDF


NNPDF is the name given to the parton distribution functions (PDFs) produced by the NNPDF Collaboration.

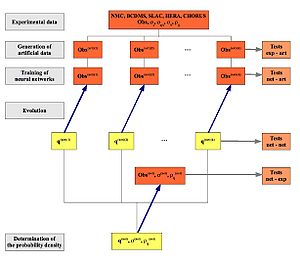

The NNPDF approach can be divided into four main steps:

- The generation of a large (Nrep = O(1000)) sample of Monte Carlo (MC) replicas of the original experimental data, such that the central values, uncertainties, and correlations of the data are reproduced with sufficient accuracy.

- The training (minimization of the χ²) of a set of PDFs parametrized by neural networks on each of the above MC replicas of the data. PDFs are parametrized at the initial evolution scale Q₀² and then evolved to the experimental data scale Q² by means of the DGLAP equations. Since the PDF parametrization is redundant, the minimization strategy is based on genetic algorithms.

- The neural network training is stopped dynamically before it enters the overlearning regime, so that the PDFs learn the physical laws that underlie the experimental data without also fitting statistical noise.

- Once the training on the MC replicas has been completed, a set of statistical estimators can be applied to the resulting set of PDFs in order to assess the statistical consistency of the results. For example, the stability with respect to the PDF parametrization can be verified explicitly.
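The first step above can be illustrated with a minimal sketch. It assumes that the experimental uncertainties are fully described by a single covariance matrix and that replicas are drawn from a multivariate Gaussian; this is a simplification for illustration, not the collaboration's actual procedure. The function names and the two-point toy data set are hypothetical.

```python
import math
import random


def cholesky(cov):
    """Return lower-triangular L with L * L^T == cov (cov must be positive definite)."""
    n = len(cov)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(cov[i][i] - s)
            else:
                L[i][j] = (cov[i][j] - s) / L[j][j]
    return L


def generate_replicas(central, cov, n_rep, seed=0):
    """Draw n_rep pseudo-data sets distributed as N(central, cov)."""
    rng = random.Random(seed)
    L = cholesky(cov)
    n = len(central)
    replicas = []
    for _ in range(n_rep):
        # Independent unit Gaussians, correlated through the Cholesky factor
        z = [rng.gauss(0.0, 1.0) for _ in range(n)]
        replicas.append(
            [central[i] + sum(L[i][k] * z[k] for k in range(i + 1)) for i in range(n)]
        )
    return replicas


# Hypothetical toy data: two correlated measurements
central = [1.0, 2.0]
cov = [[0.04, 0.01],
       [0.01, 0.09]]
replicas = generate_replicas(central, cov, n_rep=1000)
```

Averaging over the replicas should recover the input central values, and the sample covariance of the replicas should approach the input covariance matrix as the number of replicas grows, which is the sense in which the replica sample "reproduces" the data.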

The resulting ensemble of Nrep PDF sets (trained neural networks) provides a representation of the underlying PDF probability density, from which any statistical estimator can be computed.
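As a rough illustration of how estimators follow from the replica ensemble, the sketch below computes a central value, an uncertainty, and a correlation from replica values. The input numbers are made up, and the helper names are hypothetical; in practice each list would hold one PDF (or observable) value per replica.

```python
import statistics


def replica_mean(values):
    """Central value: the average over replicas."""
    return statistics.mean(values)


def replica_std(values):
    """One-sigma uncertainty: the sample standard deviation over replicas."""
    return statistics.stdev(values)


def replica_correlation(a, b):
    """Correlation coefficient between two quantities evaluated replica by replica."""
    mean_a, mean_b = statistics.mean(a), statistics.mean(b)
    cov_ab = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / len(a)
    return cov_ab / (statistics.pstdev(a) * statistics.pstdev(b))


# Hypothetical values of two quantities on a five-replica ensemble
quantity_a = [1.0, 2.0, 3.0, 4.0, 5.0]
quantity_b = [2.0, 4.0, 6.0, 8.0, 10.0]

central_a = replica_mean(quantity_a)
error_a = replica_std(quantity_a)
corr_ab = replica_correlation(quantity_a, quantity_b)
```

Because every estimator is a plain average over replicas, no assumption about the shape of the underlying distribution (for example, Gaussianity) is needed to evaluate it.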

The NNPDF Collaboration strategy is summarized in this diagram:

The image below shows the gluon distribution at small x from the NNPDF1.0 analysis, which is available through the LHAPDF interface.

NNPDF Parton Distributions

- NNPDF1.0: arXiv:0808.1231

- NNPDF1.1

- NNPDF1.2

- NNPDF2.0: a global analysis of all relevant hard-scattering data: DIS, Drell-Yan, vector boson production, and inclusive jets


Wikimedia Foundation. 2010.