- Object recognition (computer vision)

-

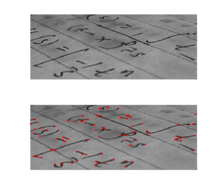

Feature detection  Output of a typical corner detection algorithm

Output of a typical corner detection algorithmEdge detection Canny · Canny-Deriche · Differential · Sobel · Prewitt · Roberts Cross Interest point detection Corner detection Harris operator · Shi and Tomasi · Level curve curvature · SUSAN · FAST Blob detection Laplacian of Gaussian (LoG) · Difference of Gaussians (DoG) · Determinant of Hessian (DoH) · Maximally stable extremal regions · PCBR Ridge detection Hough transform Structure tensor Affine invariant feature detection Affine shape adaptation · Harris affine · Hessian affine Feature description SIFT · SURF · GLOH · HOG · LESH Scale-space Scale-space axioms · Implementation details · Pyramids Object recognition in computer vision is the task of finding a given object in an image or video sequence. Humans recognize a multitude of objects in images with little effort, despite the fact that the image of the objects may vary somewhat in different view points, in many different sizes / scale or even when they are translated or rotated. Objects can even be recognized when they are partially obstructed from view. This task is still a challenge for computer vision systems in general.

Contents

Approaches based on CAD-like object models

Edge detection, primal sketch, Marr, Mohan and Nevatia, Lowe, Faugeras

Recognition by parts

Binford (generalized cylinders), Biederman (geons), Dickinson, Forsyth and Ponce

Appearance-based methods

- Use example images (called templates or exemplars) of the objects to perform recognition

- Objects look different under varying conditions:

-

- Changes in lighting or color

- Changes in viewing direction

- Changes in size / shape

- A single exemplar is unlikely to succeed reliably. However, it is impossible to represent all appearances of an object

1. Edge matching

- • Uses edge detection techniques, such as the Canny edge detection, to find edges.

- • Changes in lighting and color usually don’t have much effect on image edges

- • Strategy:

-

- Detect edges in template and image

- Compare edges images to find the template

- Must consider range of possible template positions

-

- • Measurements:

-

- Good – count the number of overlapping edges. Not robust to changes in shape

-

-

-

- Better – count the number of template edge pixels with some distance of an edge in the search image

-

-

-

- Best – determine probability distribution of distance to nearest edge in search image (if template at correct position). Estimate likelihood of each template position generating image

-

2. Divide-and-Conquer search

- • Strategy:

-

- Consider all positions as a set (a cell in the space of positions)

- Determine lower bound on score at best position in cell

- If bound is too large, prune cell

- If bound is not too large, divide cell into subcells and try each subcell recursively

- Process stops when cell is “small enough”

-

- • Unlike multi-resolution search, this technique is guaranteed to find all matches that meet the criterion (assuming that the lower bound is accurate)

- • Finding the Bound:

-

- To find the lower bound on the best score, look at score for the template position represented by the center of the cell

- Subtract maximum change from the “center” position for any other position in cell (occurs at cell corners)

-

- • Complexities arise from determining bounds on distance

3. Greyscale matching

- • Edges are (mostly) robust to illumination changes, however they throw away a lot of information

- • Must compute pixel distance as a function of both pixel position and pixel intensity

- • Can be applied to color also

4. Gradient matching

- • Another way to be robust to illumination changes without throwing away as much information is to compare image gradients

- • Matching is performed like matching greyscale images

- • Simple alternative: Use (normalized) correlation

5. Large modelbases

- • One approach to efficiently searching the database for a specific image to use eigenvectors of the templates (called eigenfaces)

- • Modelbases are a collection of geometric models of the objects that should be recognised

Feature-based methods

- a search is used to find feasible matches between object features and image features.

- the primary constraint is that a single position of the object must account for all of the feasible matches.

- methods that extract features from the objects to be recognized and the images to be searched.

-

- surface patches

- corners

- linear edges

1. Interpretation trees

- • A method for searching for feasible matches, is to search through a tree.

- • Each node in the tree represents a set of matches.

-

- Root node represents empty set

- Each other node is the union of the matches in the parent node and one additional match.

- Wildcard is used for features with no match

-

- • Nodes are “pruned” when the set of matches is infeasible.

-

- A pruned node has no children

-

- • Historically significant and still used, but less commonly

2. Hypothesize and test

- • General Idea:

-

- Hypothesize a correspondence between a collection of image features and a collection of object features

- Then use this to generate a hyponthesis about the projection from the object coordinate frame to the image frame

- Use this projection hypothesis to generate a rendering of the object. This step is usually known as backprojection

- Compare the rendering to the image, and, if the two are sufficiently similar, accept the hypothesis

-

- • Obtaining Hypothesis:

-

- There are a variety of different ways of generating hypotheses.

- When camera intrinsic parameters are known, the hypothesis is equivalent to a hypothetical position and orientation – pose – for the object.

- Utilize geometric constraints

- Construct a correspondence for small sets of object features to every correctly sized subset of image points. (These are the hypotheses)

-

- • Three basic approaches:

-

- Obtaining Hypotheses by Pose Consistency

- Obtaining Hypotheses by Pose Clustering

- Obtaining Hypotheses by Using Invariants

-

- • Expense search that is also redundant, but can be improved using Randomization and/or Grouping

-

- Randomization

- § Examining small sets of image features until likelihood of missing object becomes small

- § For each set of image features, all possible matching sets of model features must be considered.

- § Formula:

-

-

-

-

-

- ( 1 – Wc)k = Z

-

-

-

-

-

-

-

- W = the fraction of image points that are “good” (w ~ m/n)

- c = the number of correspondences necessary

- k = the number of trials

- Z = the probability of every trial using one (or more) incorrect correspondences

-

-

-

-

-

- Grouping

- § If we can determine groups of points that are likely to come from the same object, we can reduce the number of hypotheses that need to be examined

-

3. Pose consistency

- • Also called Alignment, since the object is being aligned to the image

- • Correspondences between image features and model features are not independent – Geometric constraints

- • A small number of correspondences yields the object position – the others must be consistent with this

- • General Idea:

-

- If we hypothesize a match between a sufficiently large group of image features and a sufficiently large group of object features, then we can recover the missing camera parameters from this hypothesis (and so render the rest of the object)

-

- • Strategy:

-

- Generate hypotheses using small number of correspondences (e.g. triples of points for 3D recognition)

- Project other model features into image (backproject) and verify additional correspondences

-

- • Use the smallest number of correspondences necessary to achieve discrete object poses

4. Pose clustering

- • General Idea:

-

- Each object leads to many correct sets of correspondences, each of which has (roughly) the same pose

- Vote on pose. Use an accumulator array that represents pose space for each object

- This is essentially a Hough transform

-

- • Strategy:

-

- For each object, set up an accumulator array that represents pose space – each element in the accumulator array corresponds to a “bucket” in pose space.

- Then take each image frame group, and hypothesize a correspondence between it and every frame group on every object

- For each of these correspondences, determine pose parameters and make an entry in the accumulator array for the current object at the pose value.

- If there are large numbers of votes in any object’s accumulator array, this can be interpreted as evidence for the presence of that object at that pose.

- The evidence can be checked using a verification method

-

- • Note that this method uses sets of correspondences, rather than individual correspondences

-

- Implementation is easier, since each set yields a small number of possible object poses.

-

- • Improvement

-

-

- The noise resistance of this method can be improved by not counting votes for objects at poses where the vote is obviously unreliable

- § For example, in cases where, if the object was at that pose, the object frame group would be invisible.

- These improvements are sufficient to yield working systems

-

5. Invariance

- • There are geometric properties that are invariant to camera transformations

- • Most easily developed for images of planar objects, but can be applied to other cases as well

- • An algorithm that uses geometric invariants to vote for object hypotheses

- • Similar to pose clustering, however instead of voting on pose, we are now voting on geometry

- • A technique originally developed for matching geometric features (uncalibrated affine views of plane models) against a database of such features

- • Widely used for pattern-matching, CAD/CAM, and medical imaging.

- • It is difficult to choose the size of the buckets

- • It is hard to be sure what “enough” means. Therefore there my be some danger that the table will get clogged.

7. Scale-invariant feature transform (SIFT)

- • Keypoints of objects are first extracted from a set of reference images and stored in a database

- • An object is recognized in a new image by individually comparing each feature from the new image to this database and finding candidate matching features based on Euclidean distance of their feature vectors.

8. Speeded Up Robust Features (SURF)

- • A robust image detector & descriptor

- • The standard version is several times faster than SIFT and claimed by its authors to be more robust against different image transformations than SIFT

- • Based on sums of approximated 2D Haar wavelet responses and made efficient use of integral images.

Bag of words representations

See also: Bag of words model in computer visionOther approaches

Template matching, gradient histograms, intraclass transfer learning, explicit and implicit 3D object models, global scene representations, shading, reflectance, texture, grammars, topic models, biologically inspired object recognition[1]

Window-based detection, 3D cues, context, leveraging internet data, unsupervised learning, fast indexing[2]

Applications

Object recognition methods has the following applications:

- Image panoramas[3]

- Image watermarking[4]

- Global robot localization[5]

- Face Detection [6]

- Optical Character Recognition [7]

- Manufacturing Quality Control [8]

- Content-Based Image Indexing [9]

- Object Counting and Monitoring [10]

- Automated vehicle parking systems [11]

- Visual Positioning and tracking [12]

- Video Stabilization [13]

Surveys

Daniilides and Eklundh, Edelman

See also

- 3D single object recognition

- Scale-invariant feature transform (SIFT)

- SURF

- Histogram of oriented gradients

- Boosting methods for object categorization

- Bag of words model in computer vision

Notes

- ^ 6.870 Object Recognition and Scene Understanding

- ^ CS395T: Visual Recognition and Search

- ^ Brown, M., and Lowe, D.G., "Recognising Panoramas," ICCV, p. 1218, Ninth IEEE International Conference on Computer Vision (ICCV'03) - Volume 2, Nice,France, 2003

- ^ Li, L., Guo, B., and Shao, K., " Geometrically robust image watermarking using scale-invariant feature transform and Zernike moments," Chinese Optics Letters, Volume 5, Issue 6, pp. 332-335, 2007.

- ^ Se,S., Lowe, D.G., and Little, J.J.,"Vision-based global localization and mapping for mobile robots", IEEE Transactions on Robotics, 21, 3 (2005), pp. 364-375.

- ^ Thomas Serre, Maximillian Riesenhuber, Jennifer Louie, Tomaso Poggio, "On the Role of Object-Specific features for Real World Object Recognition in Biological Vision." Artificial Intelligence Lab, and Department of Brain and Cognitive Sciences, Massachusetts Institute of Technology, Center for Biological and Computational Learning, Mc Govern Institute for Brain Research, Cambridge, MA, USA [1]

- ^ Anne Permaloff and Carl Grafton, "Optical Character Recognition," Political Science and Politics, Vol. 25, No. 3 (Sep., 1992), pp. 523-531 [2]

- ^ Christian Demant, Bernd Streicher-Abel, Peter Waszkewitz, "Industrial image processing: visual quality control in manufacturing" [3]

- ^ Nuno Vasconcelos "Image Indexing with Mixture Hierarchies," Compaq Computer Corporation, Proc. IEEE Conference in Computer Vision and Pattern Recognition, Kauai, Hawaii, 2001 [4]

- ^ Janne Heikkila, Olli Silven, "A real-time system for monitoring of cyclists and pedestrians", Image and Vision Computing, Volume 22, Issue 7, Visual Surveillance, 1 July 2004, Pages 563-570, ISSN 0262-8856 [5]

- ^ Ho Gi Jung, Dong Suk Kim, Pal Joo Yoon, Jaihie Kim, "Structure Analysis Based Parking Slot Marking Recognition for Semi-automatic Parking System" Structural, Syntactic, and Statistical Pattern Recognition, Springer Berlin / Heidelberg, 2006 [6]

- ^ S. K. Nayar, H. Murase, and S.A. Nene, "Learning, Positioning, and tracking Visual appearance," Proc. Of IEEE Intl. Conf. on Robotics and Automation, San Diego, May 1994. [7]

- ^ Liu, F., Gleicher, M., Jin, H., and Agarwala, A. 2009. Content-preserving warps for 3D video stabilization. ACM Trans. Graph. 28, 3 (Jul. 2009), 1-9. DOI= http://doi.acm.org/10.1145/1531326.1531350

References

- Elgammal, Ahmed "CS 534: Computer Vision 3D Model-based recognition", Dept of Computer Science, Rutgers University;

- Hartley, Richard and Zisserman, Andrew "Multiple View Geometry in computer vision", Cambridge Press, 2000, ISBN-0-521-62304-9.

- Roth, Peter M. and Winter, Martin “Survey of Appearance-Based Methods for Object Recognition”, Technical Report ICG-TR-01/08, Inst. for Computer Graphics and Vision, Graz University of Technology, Austria; January 15, 2008.

- Collins, Robert "Lecture 31: Object Recognition: SIFT Keys", CSE486, Penn State

Categories:- Computer vision

- Object recognition and categorization

-

Wikimedia Foundation. 2010.