- Mixture distribution


See also: Mixture model

In probability and statistics, a mixture distribution is the probability distribution of a random variable whose values can be interpreted as being derived in a simple way from an underlying set of other random variables. In particular, the final outcome value is selected at random from among the underlying values, with a certain probability of selection being associated with each. Here the underlying random variables may be random vectors, each having the same dimension, in which case the mixture distribution is a multivariate distribution.

In cases where each of the underlying random variables is continuous, the outcome variable will also be continuous and its probability density function is sometimes referred to as a mixture density. The cumulative distribution function (and the probability density function if it exists) can be expressed as a convex combination (i.e. a weighted sum, with non-negative weights that sum to 1) of other distribution functions and density functions. The individual distributions that are combined to form the mixture distribution are called the mixture components, and the probabilities (or weights) associated with each component are called the mixture weights. The number of components in a mixture distribution is often restricted to being finite, although in some cases the components may be countably infinite in number. More general cases (i.e. an uncountable set of component distributions), as well as the countable case, are treated under the title of compound distributions.

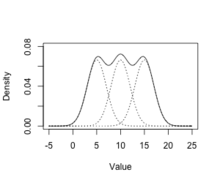

A distinction needs to be made between a random variable whose distribution function or density is the weighted sum of a set of components (i.e. a mixture distribution) and a random variable whose value is the sum of the values of two or more underlying random variables, in which case, for independent variables, the distribution is given by the convolution of their distributions. As an example, the sum of two independent normally distributed random variables, each with a different mean, is still normally distributed. On the other hand, a mixture density created as a mixture of two normal distributions with different means will have two peaks provided that the two means are far enough apart, showing that this distribution is radically different from a normal distribution.
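The difference between mixing and summing can be checked by simulation. The following is a minimal sketch, not a definitive implementation: the function names, the seed, and the parameter values (equal weights, means 0 and 6, unit standard deviations) are assumptions chosen for illustration. Under these assumptions the mixture has mean 3 and variance 10, while the sum of the two independent variables has mean 6 and variance 2.

```python
import random
import statistics

def sample_mixture(rng, n, w1, mu1, mu2, sigma):
    """Draw n values: select a component (probability w1 for the first),
    then sample from the selected normal distribution."""
    return [rng.gauss(mu1 if rng.random() < w1 else mu2, sigma) for _ in range(n)]

def sample_sum(rng, n, mu1, mu2, sigma):
    """Draw n values of X1 + X2, with X1 and X2 independent normals."""
    return [rng.gauss(mu1, sigma) + rng.gauss(mu2, sigma) for _ in range(n)]

rng = random.Random(0)  # fixed seed so the run is repeatable
mix = sample_mixture(rng, 20000, 0.5, 0.0, 6.0, 1.0)
tot = sample_sum(rng, 20000, 0.0, 6.0, 1.0)

for name, data in (("mixture", mix), ("sum", tot)):
    print(name, "mean:", round(statistics.fmean(data), 2),
          "variance:", round(statistics.pvariance(data), 2))

# Share of samples within 1 of the midpoint between the two means:
# small for the two-peaked mixture, since the midpoint lies in the valley.
print("mixture share near 3:", sum(abs(x - 3.0) < 1.0 for x in mix) / len(mix))
```

The mixture samples cluster around the two component means, whereas the summed samples cluster around a single value, the sum of the means.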

Mixture distributions arise in many contexts in the literature, most naturally where a statistical population contains two or more subpopulations. They are also sometimes used as a means of representing non-normal distributions. Data analysis concerning statistical models involving mixture distributions is discussed under the title of mixture models, while the present article concentrates on simple probabilistic and statistical properties of mixture distributions and how these relate to properties of the underlying distributions.


Finite and countable mixtures

Given a finite set of probability density functions $p_1(x), \ldots, p_n(x)$, or corresponding cumulative distribution functions $P_1(x), \ldots, P_n(x)$, and weights $w_1, \ldots, w_n$ such that $w_i \ge 0$ and $\sum_i w_i = 1$, the mixture distribution can be represented by writing either the density, $f$, or the distribution function, $F$, as a sum (which in both cases is a convex combination):

$$F(x) = \sum_{i=1}^n w_i \, P_i(x),$$

$$f(x) = \sum_{i=1}^n w_i \, p_i(x).$$

This type of mixture, being a finite sum, is called a finite mixture, and in applications, an unqualified reference to a "mixture density" usually means a finite mixture. The case of a countable set of components is covered formally by allowing n=∞.
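As an illustration of the finite case, the sketch below evaluates the density and distribution function of a mixture as weighted sums of the component functions. It assumes normal components; the function names and the example weights and parameters are hypothetical, chosen only for the demonstration.

```python
import math

def normal_pdf(x, mu, sigma):
    """Density of a normal distribution with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def normal_cdf(x, mu, sigma):
    """Cumulative distribution function of the same normal distribution."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def mixture_pdf(x, weights, params):
    """f(x) = sum_i w_i p_i(x), with params a list of (mu, sigma) pairs."""
    return sum(w * normal_pdf(x, mu, s) for w, (mu, s) in zip(weights, params))

def mixture_cdf(x, weights, params):
    """F(x) = sum_i w_i P_i(x)."""
    return sum(w * normal_cdf(x, mu, s) for w, (mu, s) in zip(weights, params))

weights = [0.3, 0.7]                # non-negative, summing to 1
params = [(-2.0, 1.0), (3.0, 0.5)]  # (mean, standard deviation) per component

# Riemann sum of the mixture density over [-12, 12]; it should be close to 1.
step = 0.001
area = sum(mixture_pdf(-12.0 + k * step, weights, params) for k in range(24000)) * step
print("area under mixture density:", area)
print("F(3) =", mixture_cdf(3.0, weights, params))
```

Because both functions are plain weighted sums, any component family with a known density or distribution function could be substituted for the normal helpers.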

Uncountable mixtures

Main article: Compound distribution

Where the set of component distributions is uncountable, the result is often called a compound probability distribution. The construction of such distributions has a formal similarity to that of mixture distributions, with either infinite summations or integrals replacing the finite summations used for finite mixtures.

Consider a probability density function $p(x;a)$ for a variable $x$, parameterized by $a$. That is, for each value of $a$ in some set $A$, $p(x;a)$ is a probability density function with respect to $x$. Given a probability density function $w$ on $A$ (meaning that $w$ is nonnegative and integrates to 1), the function

$$f(x) = \int_A w(a) \, p(x;a) \, da$$

is again a probability density function for $x$. A similar integral can be written for the cumulative distribution function. Note that the formulae here reduce to the case of a finite or countably infinite mixture if the density $w$ is allowed to be a generalised function representing the "derivative" of the cumulative distribution function of a discrete distribution.
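A standard worked instance of this integral is an exponential density whose rate is itself gamma-distributed; the resulting density is known in closed form (the Lomax, or Pareto type II, distribution). The sketch below compares a numerical evaluation of the integral with that closed form. The function names, the midpoint-rule integrator, and the parameter values are assumptions made for illustration.

```python
import math

def gamma_pdf(lam, shape, rate):
    """Mixing density w(lam): gamma distribution with the given shape and rate."""
    return rate ** shape * lam ** (shape - 1) * math.exp(-rate * lam) / math.gamma(shape)

def exponential_pdf(x, lam):
    """Component density p(x; lam): exponential distribution with rate lam."""
    return lam * math.exp(-lam * x)

def compound_pdf(x, shape, rate, upper=60.0, steps=60000):
    """Midpoint-rule approximation of the integral of w(lam) * p(x; lam) over lam."""
    h = upper / steps
    total = 0.0
    for k in range(steps):
        lam = (k + 0.5) * h
        total += gamma_pdf(lam, shape, rate) * exponential_pdf(x, lam)
    return total * h

def lomax_pdf(x, shape, rate):
    """Closed form of the same integral: shape * rate^shape / (x + rate)^(shape + 1)."""
    return shape * rate ** shape / (x + rate) ** (shape + 1)

for x in (0.0, 1.0, 5.0):
    print(x, compound_pdf(x, 3.0, 2.0), lomax_pdf(x, 3.0, 2.0))
```

The two columns agree to within the accuracy of the numerical integration, which is one way to confirm that the integral formula produces a valid density for this choice of $w$ and $p$.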

Mixtures of parametric families

The mixture components are often not arbitrary probability distributions, but instead are members of a parametric family (such as normal distributions), with different values for a parameter or parameters. In such cases, assuming that it exists, the density can be written in the form of a sum as:

$$f(x; a_1, \ldots, a_n) = \sum_{i=1}^n w_i \, p(x; a_i)$$

for one parameter, or

$$f(x; a_1, \ldots, a_n, b_1, \ldots, b_n) = \sum_{i=1}^n w_i \, p(x; a_i, b_i)$$

for two parameters, and so forth.

Properties

Convexity

A general linear combination of probability density functions is not necessarily a probability density, since it may be negative or it may integrate to something other than 1. However, a convex combination of probability density functions preserves both of these properties (non-negativity and integrating to 1), and thus mixture densities are themselves probability density functions.
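The two properties can be verified in one line each. The following LaTeX sketch assumes component densities $p_i$ and weights $w_i \ge 0$ with $\sum_i w_i = 1$; the counterexample uses two normal densities chosen only for illustration, with $\varphi$ denoting the standard normal density.

```latex
% Non-negativity: every term of the sum is non-negative.
f(x) = \sum_{i=1}^{n} w_i \, p_i(x) \;\ge\; 0
\qquad \text{since } w_i \ge 0 \text{ and } p_i(x) \ge 0 .

% Normalisation: integrate term by term.
\int_{-\infty}^{\infty} f(x)\,dx
  = \sum_{i=1}^{n} w_i \int_{-\infty}^{\infty} p_i(x)\,dx
  = \sum_{i=1}^{n} w_i = 1 .

% A non-convex combination can fail: with p_1 = N(0,1) and p_2 = N(3,1),
% g = 2 p_1 - p_2 integrates to 1 but is negative at x = 3.
g(3) = 2\varphi(3) - \varphi(0) \approx 2(0.0044) - 0.3989 < 0 .
```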

Moments

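Let $X_1, \ldots, X_n$ denote random variables from the $n$ component distributions, and let $X$ denote a random variable from the mixture distribution. Then, for any function $H(\cdot)$ for which $\operatorname{E}[H(X_i)]$ exists, and assuming that the component densities $p_i(x)$ exist,

$$\begin{aligned}
\operatorname{E}[H(X)] & = \int_{-\infty}^\infty H(x) \sum_{i = 1}^n w_i \, p_i(x) \, dx \\
& = \sum_{i = 1}^n w_i \int_{-\infty}^\infty p_i(x) H(x) \, dx = \sum_{i = 1}^n w_i \operatorname{E}[H(X_i)].
\end{aligned}$$

The relation

$$\operatorname{E}[H(X)] = \sum_{i = 1}^n w_i \operatorname{E}[H(X_i)]$$

holds more generally.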

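It follows that the $j$th moment about zero (i.e. choosing $H(x) = x^j$) is simply a weighted average of the $j$th moments of the components. Moments about the mean, $H(x) = (x - \mu)^j$, involve a binomial expansion:[1]

$$\begin{aligned}
\operatorname{E}[(X - \mu)^j] & = \sum_{i = 1}^n w_i \operatorname{E}[(X_i - \mu_i + \mu_i - \mu)^j] \\
& = \sum_{i=1}^n \sum_{k=0}^j \binom{j}{k} (\mu_i - \mu)^{j-k} \, w_i \operatorname{E}[(X_i - \mu_i)^k],
\end{aligned}$$

where $\mu_i$ denotes the mean of the $i$th component. In the case of a mixture of one-dimensional normal distributions with weights $w_i$, means $\mu_i$ and variances $\sigma_i^2$, the total mean and variance will be:

$$\operatorname{E}[X] = \mu = \sum_{i = 1}^n w_i \mu_i ,$$

$$\operatorname{E}[(X - \mu)^2] = \sigma^2 = \sum_{i = 1}^n w_i (\mu_i^2 + \sigma_i^2) - \mu^2 .$$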
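The moment relations for a mixture of normal components can be sketched in a few lines. This is an illustrative example only: the function names are hypothetical, and it relies on the standard fact that a normal variable has odd central moments equal to zero and even central moments equal to $\sigma^k (k-1)!!$.

```python
from math import comb

def normal_central_moment(sigma, k):
    """E[(Z - E[Z])^k] for a normal Z: zero for odd k, sigma^k * (k-1)!! for even k."""
    if k % 2 == 1:
        return 0.0
    value = sigma ** k
    for m in range(k - 1, 0, -2):  # double factorial (k-1)(k-3)...1
        value *= m
    return value

def mixture_mean(weights, means):
    """Weighted average of the component means."""
    return sum(w * m for w, m in zip(weights, means))

def mixture_variance(weights, means, sigmas):
    """sum_i w_i (mu_i^2 + sigma_i^2) - mu^2."""
    mu = mixture_mean(weights, means)
    return sum(w * (m * m + s * s) for w, m, s in zip(weights, means, sigmas)) - mu * mu

def mixture_central_moment(weights, means, sigmas, j):
    """j-th moment about the mixture mean, via the binomial expansion."""
    mu = mixture_mean(weights, means)
    total = 0.0
    for w, m, s in zip(weights, means, sigmas):
        for k in range(j + 1):
            total += comb(j, k) * (m - mu) ** (j - k) * w * normal_central_moment(s, k)
    return total

weights, means, sigmas = [0.3, 0.7], [0.0, 4.0], [1.0, 2.0]
print("mean:", mixture_mean(weights, means))
print("variance:", mixture_variance(weights, means, sigmas))
print("third central moment:", mixture_central_moment(weights, means, sigmas, 3))
```

For $j = 2$ the binomial expansion reproduces the variance formula, which gives a simple consistency check between the two routines.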

These relations highlight the potential of mixture distributions to display non-trivial higher-order moments such as skewness and kurtosis (fat tails) and multi-modality, even in the absence of such features within the components themselves. Marron and Wand (1992) give an illustrative account of the flexibility of this framework.

Modes

The question of multimodality is simple for some cases, such as mixtures of exponential distributions: all such mixtures are unimodal.[2] For mixtures of normal distributions, however, the question is complex. Conditions for the number of modes in a multivariate normal mixture are explored by Ray and Lindsay,[3] extending the earlier work on univariate (Robertson and Fryer, 1969; Behboodian, 1970) and multivariate distributions (Carreira-Perpinan and Williams, 2003).

Here the problem of evaluating the modes of an $n$-component mixture in a $D$-dimensional space is reduced to the identification of critical points (local minima, maxima and saddle points) on a manifold referred to as the ridgeline surface, defined by

$$x^{*}(\alpha) = \left[ \sum_{i=1}^{n} \alpha_i \Sigma_i^{-1} \right]^{-1} \times \left[ \sum_{i=1}^{n} \alpha_i \Sigma_i^{-1} \mu_i \right],$$

where $\alpha$ belongs to the $(n-1)$-dimensional unit simplex

$$\mathcal{S}_n = \left\{ \alpha \in \mathbb{R}^n : \alpha_i \in [0,1], \ \sum_{i=1}^n \alpha_i = 1 \right\}$$

and $\Sigma_i \in \mathbb{R}^{D \times D}$, $\mu_i \in \mathbb{R}^D$ correspond to the covariance and mean of the $i$th component. Ray and Lindsay[3] consider the case in which $n - 1 < D$, showing a one-to-one correspondence between the modes of the mixture and those of the elevation function $h(\alpha) = q(x^{*}(\alpha))$, the mixture density evaluated along the ridgeline. Thus one may identify the modes by solving

$$\frac{d h(\alpha)}{d \alpha} = 0$$

with respect to $\alpha$ and determining the value $x^{*}(\alpha)$.

Using graphical tools, the potential multimodality of mixtures with $n = 2$ and $n = 3$ components is demonstrated; in particular it is shown that the number of modes may exceed $n$ and that the modes may not coincide with the component means. For two components they develop a graphical tool for analysis by instead solving the aforementioned differential with respect to $w_1$ and expressing the solutions as a function $\Pi(\alpha)$, $\alpha \in [0, 1]$, so that the number and location of modes for a given value of $w_1$ corresponds to the number of intersections of the graph with the line $\Pi(\alpha) = w_1$. This in turn can be related to the number of oscillations of the graph and therefore to solutions of

$$\frac{d \Pi(\alpha)}{d \alpha} = 0,$$

leading to an explicit solution for a two-component homoscedastic mixture given by

$$1 - \alpha(1 - \alpha) \, d_M(\mu_1, \mu_2, \Sigma)^2,$$

where $d_M(\mu_1, \mu_2, \Sigma) = \sqrt{(\mu_2 - \mu_1)^{\mathsf{T}} \Sigma^{-1} (\mu_2 - \mu_1)}$ is the Mahalanobis distance between $\mu_1$ and $\mu_2$.

Since the above is quadratic in $\alpha$, it follows that in this instance there are at most two modes irrespective of the dimension or the weights.
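The one-dimensional, two-component homoscedastic case can be explored numerically. The sketch below counts local maxima of the mixture density on a fine grid and also reports whether the quadratic $1 - \alpha(1-\alpha)d^2$ has real roots, which by elementary algebra happens exactly when $d \ge 2$ (its minimum, $1 - d^2/4$, is attained at $\alpha = 1/2$). The brute-force grid search is a stand-in for the analytical method, not the procedure of Ray and Lindsay, and the function names, grid step and equal weights are assumptions made for illustration.

```python
import math

def mixture_pdf(x, w1, mu1, mu2, sigma):
    """Density of w1 * N(mu1, sigma^2) + (1 - w1) * N(mu2, sigma^2)."""
    norm = sigma * math.sqrt(2.0 * math.pi)
    p1 = math.exp(-0.5 * ((x - mu1) / sigma) ** 2) / norm
    p2 = math.exp(-0.5 * ((x - mu2) / sigma) ** 2) / norm
    return w1 * p1 + (1.0 - w1) * p2

def count_modes(w1, mu1, mu2, sigma, step=0.001):
    """Count local maxima of the density on a grid covering both components."""
    lo = min(mu1, mu2) - 5.0 * sigma
    hi = max(mu1, mu2) + 5.0 * sigma
    n = int(round((hi - lo) / step))
    ys = [mixture_pdf(lo + k * step, w1, mu1, mu2, sigma) for k in range(n + 1)]
    # ">=" on the right so that a tie between two adjacent grid points counts once.
    return sum(1 for k in range(1, n) if ys[k - 1] < ys[k] >= ys[k + 1])

def quadratic_has_real_roots(mu1, mu2, sigma):
    """True when 1 - a(1 - a) d^2 = 0 has real roots in a, i.e. when d >= 2."""
    d = abs(mu2 - mu1) / sigma  # Mahalanobis distance in one dimension
    return 1.0 - d * d / 4.0 <= 0.0

for mu2 in (1.0, 4.0):
    print("d =", mu2, "modes:", count_modes(0.5, 0.0, mu2, 1.0),
          "real roots:", quadratic_has_real_roots(0.0, mu2, 1.0))
```

With equal weights, the grid count gives one mode for closely spaced means and two for well-separated means, never more, in line with the bound stated above.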

Applications

For more details on this topic, see Mixture model.

Mixture densities express complex densities in terms of simpler densities (the mixture components). They are used both because they provide a good model for certain data sets (where different subsets of the data exhibit different characteristics and can best be modeled separately) and because they can be more mathematically tractable, since the individual mixture components can be studied more easily than the overall mixture density.

Mixture densities can be used to model a statistical population with subpopulations, where the mixture components are the densities on the subpopulations, and the weights are the proportions of each subpopulation in the overall population.

Mixture densities can also be used to model experimental error or contamination: one assumes that most of the samples measure the desired phenomenon, while the remainder come from a different, contaminating distribution.

Parametric statistics that assume no error often fail on such mixture densities – for example, statistics that assume normality often fail disastrously in the presence of even a few outliers – and instead one uses robust statistics.

See also

Mixture

Hierarchical models

Notes

References

- Frühwirth-Schnatter, Sylvia (2006), Finite Mixture and Markov Switching Models, Springer, ISBN 978-1-4419-2194-9

Categories: Probability distributions; Systems of probability distributions

Wikimedia Foundation. 2010.
